In 10 Minutes, I'll Give You the Truth About What ChatGPT Is

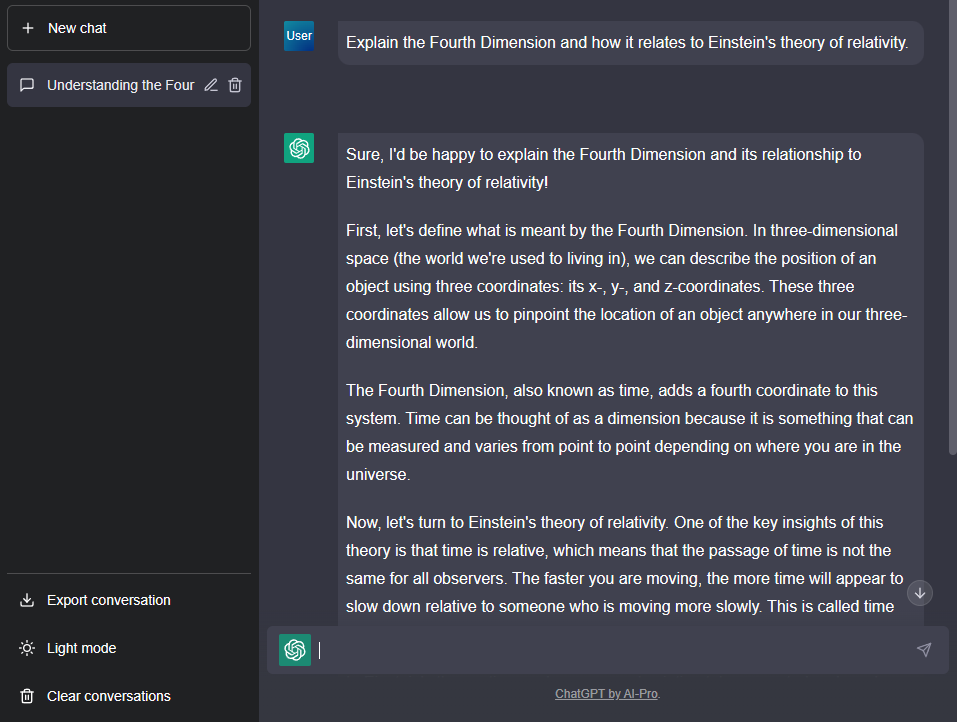

For instance, when a customer calls or chats with your virtual agent and asks a specific question, ChatGPT can reference this embedded knowledge in real time. So when you ask ChatGPT a question, it tries to give you the best answer it can based on what it has learned! ChatGPT refused to answer directly and stated that using slang names or derogatory terms for any nationality can be offensive and disrespectful. ChatGPT was created using a state-of-the-art machine learning architecture called the transformer, which allows the model to process and understand large amounts of text data. In the original Transformer, encoder-decoder attention is computed in the same way as self-attention, but with one key difference: the queries come from the decoder, while the keys and values come from the encoder. As the decoder builds the sentence, it uses information from the encoder and from what it has already generated. This mechanism lets the decoder leverage the rich contextual embeddings produced by the encoder, ensuring that every generated word is informed by the entire input sequence. The decoder’s design lets it consider previously generated words as it produces each new word, which keeps the output coherent and contextually relevant. Masking in the decoder’s self-attention ensures that only previous words, never later ones, influence each prediction.
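To make the difference between the two kinds of attention concrete, here is a minimal sketch in plain Python. It assumes scaled dot-product attention as described in "Attention Is All You Need" and leaves out the learned projection matrices a real model would apply first. The function names and the toy vectors are hypothetical, chosen only for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values, causal=False):
    """Scaled dot-product attention over lists of vectors.

    With causal=True, query i may only attend to positions 0..i,
    which is the masking used in decoder self-attention.
    """
    d_k = len(keys[0])
    outputs = []
    for i, q in enumerate(queries):
        limit = i + 1 if causal else len(keys)
        scores = [
            sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k)
            for k in keys[:limit]
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values[:limit]))
            for j in range(len(values[0]))
        ])
    return outputs

# Toy data (hypothetical): 3 encoder positions, 2 decoder positions, width 2.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_states = [[1.0, 0.0], [0.0, 1.0]]

# Cross-attention: queries from the decoder, keys and values from the encoder.
cross = attention(decoder_states, encoder_states, encoder_states)

# Masked self-attention: the decoder attends only to itself, left to right.
masked = attention(decoder_states, decoder_states, decoder_states, causal=True)
```

In the masked call, the first decoder position can see only itself, so its output is simply its own value vector. In the cross-attention call, every decoder position mixes information from all three encoder positions.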

Layer normalization keeps the model stable during training by normalizing each layer's output to a mean of 0 and a variance of 1. This smooths learning, making the model less sensitive to changes in weight updates during backpropagation. Instead of performing attention once, the model performs it eight times in parallel, each time with a different set of learned weight matrices. After multi-head attention is applied, the model passes the result through a simple feed-forward network to add more complexity and non-linearity. The residual connection helps gradient flow during training by allowing gradients to bypass one or more layers. Unlike older models such as RNNs, which handled words one at a time, the Transformer encodes every word at the same time. One of the biggest ethical concerns with ChatGPT is bias in its training data. These models are trained on vast amounts of text data and can generate human-like responses to text inputs. Training data for the model includes materials such as books, articles, and web pages. Additionally, as in the encoder, the decoder employs layer normalization and residual connections. In every layer of the encoder, residual connections (also known as skip connections) are added. Generating output one word at a time distinguishes the decoder from the encoder, which processes its input in parallel.
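The residual connection and layer normalization are usually applied together as an "add and norm" step. The sketch below is a simplified illustration that assumes the post-norm arrangement of the original Transformer and omits the learnable gain and bias that real layer normalization includes. The function names and sample values are hypothetical.

```python
import math

def layer_norm(vector, eps=1e-5):
    """Normalize one position's features to mean 0 and variance close to 1."""
    mean = sum(vector) / len(vector)
    variance = sum((x - mean) ** 2 for x in vector) / len(vector)
    return [(x - mean) / math.sqrt(variance + eps) for x in vector]

def add_and_norm(sublayer_input, sublayer_output):
    """Residual (skip) connection followed by layer normalization."""
    summed = [a + b for a, b in zip(sublayer_input, sublayer_output)]
    return layer_norm(summed)

# Hypothetical values: x enters a sub-layer, sublayer_out is what it produced.
x = [1.0, 2.0, 3.0, 4.0]
sublayer_out = [0.5, -0.5, 0.5, -0.5]
y = add_and_norm(x, sublayer_out)
```

Because `x` is added back before normalizing, the gradient has a direct path around the sub-layer, which is the bypass described above.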

The mainstream understanding sees socialization as a complex, multifaceted process that can be studied, understood, and critiqued, whereas conspiracy theories often present a more monolithic and malicious interpretation without substantive evidence. This process allows the model to learn and combine various levels of abstraction from the input, making the model more robust in understanding the sentence. This information allows marketers to optimize their content by incorporating these keywords strategically. Self-attention allows each word in the input sentence to "look" at the other words and decide which ones are most relevant to it. While embeddings capture the meaning of words, they do not preserve information about their order in the sentence. These tokens can be individual words, but they can also be subwords or even characters, depending on the tokenization method used. In fact, AI doesn’t even need to achieve human-level intelligence to be just as capable as any writer or producer.
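Since embeddings alone carry no word order, Transformers add position information to them. One common remedy, used in the original Transformer paper, is the sinusoidal positional encoding sketched below. Other models learn their position vectors instead, so treat this as one option rather than what ChatGPT necessarily does. The function name and the toy embeddings are hypothetical.

```python
import math

def positional_encoding(num_positions, d_model):
    """Build a table of sinusoidal position vectors, one row per position."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(d_model):
            # Each pair of dimensions (2k, 2k+1) shares one wavelength.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

pe = positional_encoding(num_positions=4, d_model=6)

# Hypothetical embeddings: the same word repeated at four positions.
embeddings = [[0.1] * 6 for _ in range(4)]

# Adding the encoding makes identical words distinguishable by position.
with_position = [
    [e + p for e, p in zip(emb_row, pe_row)]
    for emb_row, pe_row in zip(embeddings, pe)
]
```

After the addition, the four copies of the same embedding are no longer identical, so later layers can tell where each word sits in the sentence.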

By now, millions of people have tried ChatGPT, but there is an even more advanced version of this disruptive AI service called ChatGPT Plus. Introduced in the groundbreaking research paper "Attention Is All You Need," Transformers brought a new approach that revolutionized NLP. Earlier models had limitations. By parallelizing processing and leveraging self-attention, Transformers have overcome them. This makes Transformers more effective and efficient for a wide range of NLP tasks, from machine translation to text summarization. The perils of trusting the expert in the machine, however, go far beyond whether AI-generated code is buggy or not. Making the most of these tools can also be a challenge. It is important to challenge false narratives and promote a more accurate and inclusive representation of Jesus based on historical and cultural context. The feed-forward network operates independently on each word and helps the model make more refined predictions after attention has been applied.
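"Operates independently on each word" means the same small network is applied to every position, with no mixing between positions. Here is a minimal sketch, assuming two linear layers with a ReLU in between, as in the original Transformer. The weights are hand-picked, hypothetical numbers, not values from any trained model.

```python
def relu(vector):
    """Set negative entries to zero; this is the non-linearity."""
    return [max(0.0, x) for x in vector]

def linear(vector, weights, bias):
    """Apply one dense layer; weights holds one row per output unit."""
    return [
        sum(w * x for w, x in zip(row, vector)) + b
        for row, b in zip(weights, bias)
    ]

def feed_forward(vector, w1, b1, w2, b2):
    """Expand to a hidden layer, apply ReLU, project back down."""
    return linear(relu(linear(vector, w1, b1)), w2, b2)

# Hypothetical weights: width 2 -> hidden width 3 -> width 2.
w1 = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
b1 = [0.0, 0.0, 0.0]
w2 = [[1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
b2 = [0.0, 0.0]

# Three word vectors; the first and third are identical on purpose.
sentence = [[1.0, 2.0], [-1.0, 0.5], [1.0, 2.0]]
outputs = [feed_forward(word, w1, b1, w2, b2) for word in sentence]
```

The first and third outputs come out identical because the network sees each word in isolation. Any interaction between words has to happen in the attention step that runs before it.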
