DeepSeek Secrets

DeepSeek Chat comes in two variants, with 7B and 67B parameters, both trained on a dataset of 2 trillion tokens, according to the maker. Multi-agent setups are worth trying: having another LLM correct the first one's mistakes, or having two models enter a dialogue and reach a better result together, is entirely possible. The first model, @hf/thebloke/deepseek-coder-6.7b-base-awq, generates natural language steps for data insertion. Structured information can then be extracted from LLM responses. There's no easy answer to any of this: everyone (myself included) needs to work out their own morality and approach here. The Mixture-of-Experts (MoE) approach used by the model is key to its performance. Xin believes that synthetic data will play a key role in advancing LLMs. The key innovation in this work is the use of a novel optimization technique called Group Relative Policy Optimization (GRPO), which is a variant of the Proximal Policy Optimization (PPO) algorithm.
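The paragraph above mentions extracting structured information from LLM responses without showing how. One common approach, sketched here under assumptions, is to ask the model for JSON and then pull the first parseable JSON object out of the reply. The function name `extract_json` and the sample reply are hypothetical and not taken from the original post.

```python
import json
import re
from typing import Any, Optional

FENCE = "`" * 3  # Markdown code fence, built this way to keep the example readable


def extract_json(response_text: str) -> Optional[Any]:
    """Return the first JSON object found in an LLM reply, or None if there is none."""
    candidates = []
    # Prefer a fenced block (optionally tagged "json") if the model produced one.
    pattern = FENCE + r"(?:json)?\s*(\{.*?\})\s*" + FENCE
    fenced = re.search(pattern, response_text, re.DOTALL)
    if fenced:
        candidates.append(fenced.group(1))
    # Fall back to the widest {...} span anywhere in the text.
    start, end = response_text.find("{"), response_text.rfind("}")
    if start != -1 and end > start:
        candidates.append(response_text[start:end + 1])
    for candidate in candidates:
        try:
            return json.loads(candidate)
        except json.JSONDecodeError:
            continue  # Not valid JSON; try the next candidate.
    return None
```

This is deliberately forgiving: models often wrap JSON in prose or a code fence, so the sketch tries the fenced form first and only then the raw braces. Validating the parsed object against an expected schema would be a sensible next step.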

These GPTQ models are known to work in the following inference servers/webuis. Instruction Following Evaluation: On Nov 15th, 2023, Google released an instruction following evaluation dataset. A company based in China which aims to "unravel the mystery of AGI with curiosity" has released DeepSeek LLM, a 67 billion parameter model trained from scratch on a dataset of 2 trillion tokens. Step 3: Instruction fine-tuning on 2B tokens of instruction data, resulting in instruction-tuned models (DeepSeek-Coder-Instruct). Although the deepseek-coder-instruct models are not specifically trained for code completion tasks during supervised fine-tuning (SFT), they retain the capability to perform code completion effectively. Ollama is essentially Docker for LLM models: it allows us to quickly run various LLMs and host them over standard completion APIs locally. The benchmark involves synthetic API function updates paired with program synthesis examples that use the updated functionality, with the goal of testing whether an LLM can solve these examples without being provided the documentation for the updates. Batches of account details were being bought by a drug cartel, who linked the customer accounts to easily obtainable personal details (like addresses) to facilitate anonymous transactions, allowing a significant amount of funds to move across international borders without leaving a signature.
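To make the point about Ollama hosting models over local completion APIs concrete, here is a minimal sketch that builds and sends a request to Ollama's `/api/generate` endpoint using only the standard library. It assumes Ollama is running on its default address `http://localhost:11434` and that a model has already been pulled; the helper names `build_generate_request` and `generate` are hypothetical.

```python
import json
import urllib.request

OLLAMA_HOST = "http://localhost:11434"  # Assumption: Ollama's default local address


def build_generate_request(model: str, prompt: str,
                           host: str = OLLAMA_HOST) -> urllib.request.Request:
    """Build a non-streaming completion request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{host}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, host: str = OLLAMA_HOST,
             timeout: float = 120.0) -> str:
    """Send the request and return the generated text (needs a running server)."""
    request = build_generate_request(model, prompt, host)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False the server replies with one JSON object
    # whose "response" field holds the completion text.
    return body["response"]
```

With a server running, a call such as `generate("deepseek-coder:6.7b", "Write a function that reverses a string.")` would return the completion; the model tag here is an assumption and should match whatever has been pulled locally.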

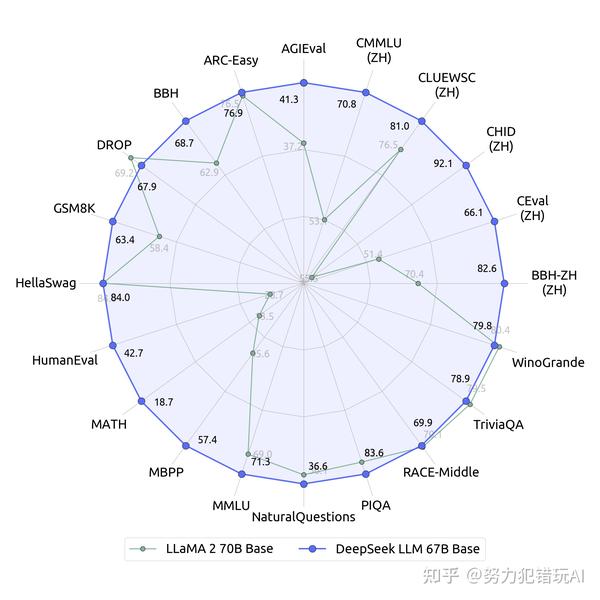

To access an internet-served AI system, a user must either log in via one of these platforms or associate their details with an account on one of these platforms. Evaluation details are here. The DeepSeek v3 paper (and [...]) are out, after yesterday's mysterious release of [...]. There are lots of interesting details in here. It provides a header prompt, based on the guidance from the paper. Compared with Meta's Llama3.1 (405 billion parameters used all at once), DeepSeek V3 is over 10 times more efficient yet performs better. People who tested the 67B-parameter assistant said the tool had outperformed Meta's Llama 2-70B, the current best we have in the LLM market. It gives the LLM context on project/repository related files. The plugin not only pulls the current file, but also loads all the currently open files in VSCode into the LLM context. I created a VSCode plugin that implements these techniques and is able to interact with Ollama running locally.
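The context-building idea described above (a header prompt followed by the current file plus all other open files) can be sketched as follows. This is an illustration in Python rather than the plugin's actual code, which is not shown in the post; `HEADER_PROMPT`, `build_context`, and the character budget are all hypothetical.

```python
from typing import Dict

# Hypothetical wording; the post does not give the actual header prompt.
HEADER_PROMPT = "You are a coding assistant. Use the project files below as context."


def build_context(current_path: str, open_files: Dict[str, str],
                  max_chars: int = 8000) -> str:
    """Join a header prompt and the open files into one context string.

    The current file is placed last so it sits closest to the completion point.
    """
    ordered = [path for path in open_files if path != current_path]
    if current_path in open_files:
        ordered.append(current_path)
    sections = [f"# File: {path}\n{open_files[path]}" for path in ordered]
    body = "\n\n".join(sections)
    # If the body exceeds the budget, keep its tail so the current file survives.
    budget = max(max_chars - len(HEADER_PROMPT) - 2, 0)
    if len(body) > budget:
        body = body[len(body) - budget:]
    return HEADER_PROMPT + "\n\n" + body
```

Truncating from the front is a design choice made for this sketch: the files furthest from the cursor are dropped first. A real plugin would more likely count tokens than characters.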

Note: Unlike Copilot, we'll focus on locally running LLMs. This should be appealing to any developers working in enterprises that have data privacy and sharing concerns, but still want to improve their developer productivity with locally running models. In DeepSeek you just have two: DeepSeek-V3 is the default, and if you want to use its advanced reasoning model you have to tap or click the 'DeepThink (R1)' button before entering your prompt. Applications that require facility in both math and language may benefit from switching between the two. Understanding Cloudflare Workers: I started by researching how to use Cloudflare Workers and Hono for serverless applications. The main advantage of using Cloudflare Workers over something like GroqCloud is their large variety of models. By 2019, he established High-Flyer as a hedge fund focused on developing and using A.I. The DeepSeek-V3 series (including Base and Chat) supports commercial use. In December 2024, they released a base model, DeepSeek-V3-Base, and a chat model, DeepSeek-V3.
