Unknown Facts About DeepSeek Made Known

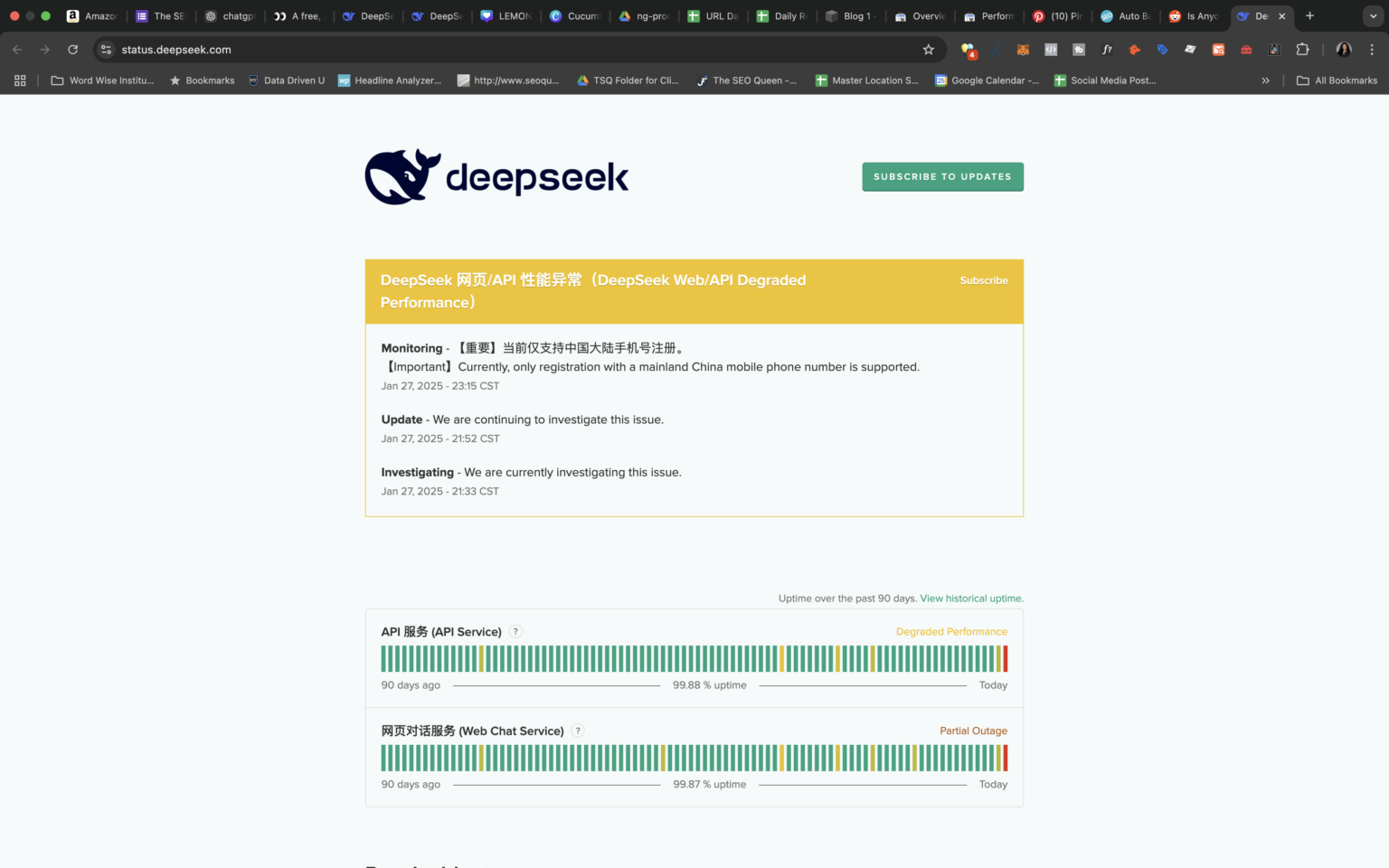

Has anyone managed to get the DeepSeek API working? The open-source generative AI movement can be tough to stay on top of, even for those working in or covering the field, such as us journalists at VentureBeat. Among open models, we've seen Command R, DBRX, Phi-3, Yi-1.5, Qwen2, DeepSeek V2, Mistral (NeMo, Large), Gemma 2, Llama 3, and Nemotron-4. I hope that further distillation will happen and we'll get great, capable models that follow instructions well in the 1-8B range. So far, models below 8B are far too basic compared with larger ones. Yet fine-tuning has too high an entry point compared with simple API access and prompt engineering. I don't pretend to understand the complexities of the models and the relationships they're trained to form, but the fact that powerful models can be trained for a reasonable amount (compared with OpenAI raising 6.6 billion dollars to do some of the same work) is interesting.
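For anyone stuck on the API question, here is a minimal sketch of a chat request using only the Python standard library. It assumes the DeepSeek API accepts OpenAI-style chat completion requests at `https://api.deepseek.com/chat/completions` with a model named `deepseek-chat`; neither value comes from this post, so check both against the current official documentation. The helper names `build_request` and `ask` are made up for illustration.

```python
import json
import urllib.request

# Assumptions, not taken from this post: endpoint URL and model name.
# Verify both against DeepSeek's current API documentation.
API_URL = "https://api.deepseek.com/chat/completions"
MODEL = "deepseek-chat"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt: str, api_key: str) -> str:
    """Send the request and return the text of the first reply.

    Assumes the response follows the OpenAI chat completion shape.
    """
    request = build_request(prompt, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Say hello in one sentence.", api_key)` with a valid key performs the live request. Keeping `build_request` separate makes it possible to inspect the URL, headers, and JSON body without touching the network, which is usually the quickest way to spot a wrong endpoint or a missing header.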

There's a fair amount of discussion. Run DeepSeek-R1 locally for free in just three minutes! It forced DeepSeek's domestic competitors, including ByteDance and Alibaba, to cut the usage prices for some of their models and make others completely free. If you want to track whoever has 5,000 GPUs on your cloud so you have a sense of who is capable of training frontier models, that's relatively easy to do. The promise and edge of LLMs is the pre-trained state: no need to collect and label data or spend time and money training your own specialized models - just prompt the LLM. It's to actually have very massive manufacturing in NAND, or manufacturing that is not as cutting-edge. I could very well figure it out myself if needed, but it's a clear time saver to immediately get a correctly formatted CLI invocation. I'm trying to figure out the right incantation to get it to work with Discourse. There will be bills to pay, and right now it doesn't look like it'll be companies. Every time I read a post about a new model, there was a statement comparing its evals to, and challenging, models from OpenAI.

The model was trained on 2,788,000 H800 GPU hours at an estimated cost of $5,576,000. KoboldCpp is a fully featured web UI with GPU acceleration across all platforms and GPU architectures. Llama 3.1 405B was trained on 30,840,000 GPU hours, 11x the amount used by DeepSeek V3, for a model that benchmarks slightly worse. Notice how 7-9B models come close to or surpass the scores of GPT-3.5, the king model behind the ChatGPT revolution. I'm a skeptic, especially because of the copyright and environmental issues that come with building and running these services at scale. A welcome result of the increased efficiency of the models, both the hosted ones and the ones I can run locally, is that the energy usage and environmental impact of running a prompt have dropped enormously over the past couple of years. Depending on how much VRAM you have on your machine, you may be able to take advantage of Ollama's ability to run multiple models and handle multiple concurrent requests by using DeepSeek Coder 6.7B for autocomplete and Llama 3 8B for chat.
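The training figures above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the three numbers quoted in this post; the per-hour rate is simply what those numbers imply, and the function names are made up for illustration. It also treats a GPU hour as interchangeable across hardware, which is a simplification.

```python
# Figures quoted in the post.
DEEPSEEK_V3_GPU_HOURS = 2_788_000
DEEPSEEK_V3_COST_USD = 5_576_000
LLAMA_31_405B_GPU_HOURS = 30_840_000


def implied_hourly_rate(cost_usd: float, gpu_hours: float) -> float:
    """Dollar cost per GPU hour implied by a total cost and total hours."""
    return cost_usd / gpu_hours


def gpu_hour_ratio(hours_a: float, hours_b: float) -> float:
    """How many times more GPU hours run A used than run B."""
    return hours_a / hours_b


rate = implied_hourly_rate(DEEPSEEK_V3_COST_USD, DEEPSEEK_V3_GPU_HOURS)
ratio = gpu_hour_ratio(LLAMA_31_405B_GPU_HOURS, DEEPSEEK_V3_GPU_HOURS)

print(f"Implied rate: ${rate:.2f} per GPU hour")  # Implied rate: $2.00 per GPU hour
print(f"Llama 3.1 405B used {ratio:.2f}x the GPU hours")  # 11.06x
```

The estimate works out to exactly $2 per H800 GPU hour, and the "11x" comparison is a rounding of roughly 11.06.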

We release DeepSeek LLM 7B/67B, including both base and chat models, to the public. Since release, we've also gotten confirmation of the Chatbot Arena ranking that places them in the top 10, above the likes of recent Gemini Pro models, Grok 2, o1-mini, etc. With only 37B active parameters, this is extremely appealing for many enterprise applications. I'm not going to start using an LLM daily, but reading Simon over the last year is helping me think critically. Alessio Fanelli: Yeah. And I think the other big thing about open source is retaining momentum. I think the last paragraph is where I'm still sticking. The topic started because someone asked whether he still codes, now that he is the founder of such a large company. Here's everything you need to know about DeepSeek's V3 and R1 models and why the company could fundamentally upend America's AI ambitions. Models converge to the same levels of performance, judging by their evals. All of this suggests that the models' performance has hit some natural limit. LLM technology has hit a ceiling, with no clear answer as to whether the $600B investment will ever have reasonable returns. Censorship regulation and implementation in China's leading models have been effective in restricting the range of possible outputs of the LLMs without suffocating their capacity to answer open-ended questions.
