If You'd Like to Be Successful in DeepSeek, Here Are 5 Invaluab…


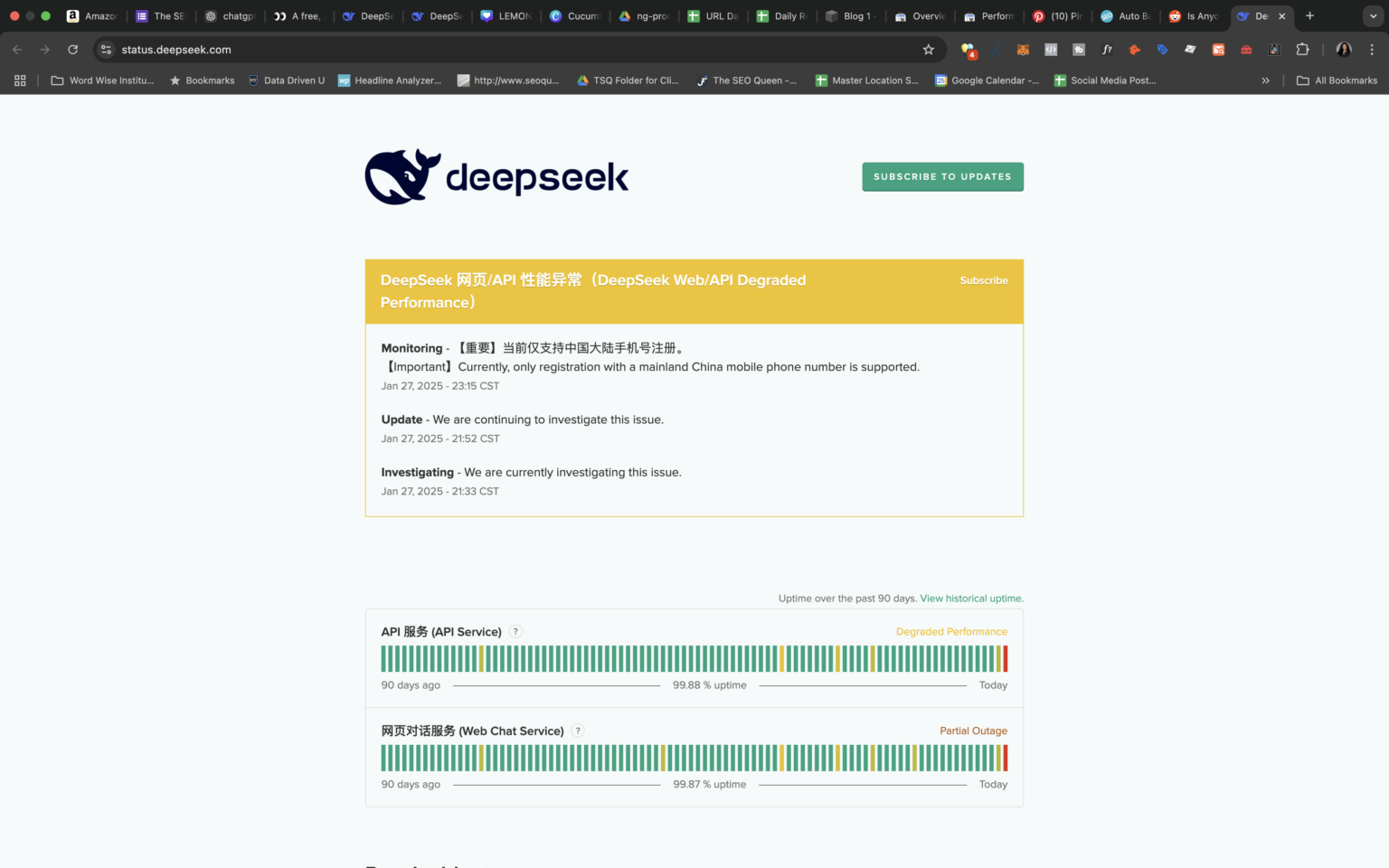

For this fun test, DeepSeek was certainly comparable to its best-known US competitor. "Time will tell if the DeepSeek threat is real - the race is on as to what technology works and how the big Western players will respond and evolve," Michael Block, market strategist at Third Seven Capital, told CNN. If a Chinese startup can build an AI model that works just as well as OpenAI’s latest and greatest, and do so in under two months and for less than $6 million, then what use is Sam Altman anymore? Can DeepSeek Coder be used for commercial purposes? The DeepSeek-R1 series supports commercial use and allows any modifications and derivative works, including, but not limited to, distillation for training other LLMs. From the outset, it was free for commercial use and fully open-source. DeepSeek became the most downloaded free app in the US just a week after it was launched. Later, on November 29, 2023, DeepSeek launched DeepSeek LLM, described as the "next frontier of open-source LLMs," scaled up to 67B parameters.


That decision was certainly fruitful, and now the open-source family of models, including DeepSeek Coder, DeepSeek LLM, DeepSeekMoE, DeepSeek-Coder-V1.5, DeepSeekMath, DeepSeek-VL, DeepSeek-V2, DeepSeek-Coder-V2, and DeepSeek-Prover-V1.5, can be used for many purposes and is democratizing the use of generative models. In addition to DeepSeek’s R1 model being able to explain its reasoning, it is based on an open-source family of models that can be accessed on GitHub. OpenAI, DeepSeek’s closest U.S. That is why the world’s most powerful models are made either by massive corporate behemoths like Facebook and Google, or by startups that have raised unusually large amounts of capital (OpenAI, Anthropic, XAI). Why is DeepSeek so significant? "I wouldn't be surprised to see the DOD embrace open-source American reproductions of DeepSeek and Qwen," Gupta said. See the five capabilities at the core of this process. "We attribute the state-of-the-art performance of our models to: (i) large-scale pretraining on a large curated dataset, which is specifically tailored to understanding humans, (ii) scaled high-resolution and high-capacity vision transformer backbones, and (iii) high-quality annotations on augmented studio and synthetic data," Facebook writes. Later, in March 2024, DeepSeek tried their hand at vision models and launched DeepSeek-VL for high-quality vision-language understanding. In February 2024, DeepSeek introduced a specialized model, DeepSeekMath, with 7B parameters.


Ritwik Gupta, who with several colleagues wrote one of the seminal papers on building smaller AI models that produce big results, cautioned that much of the hype around DeepSeek shows a misreading of exactly what it is, which he described as "still a big model," with 671 billion parameters. We present DeepSeek-V3, a strong Mixture-of-Experts (MoE) language model with 671B total parameters with 37B activated for each token. Capabilities: Mixtral is a sophisticated AI model using a Mixture of Experts (MoE) architecture. Their innovative approaches to attention mechanisms and the Mixture-of-Experts (MoE) technique have led to impressive efficiency gains. He told Defense One: "DeepSeek is an excellent AI advancement and a perfect example of Test Time Scaling," a technique that increases computing power when the model is taking in data to produce a new result. "DeepSeek challenges the idea that larger scale models are always more performative, which has important implications given the security and privacy vulnerabilities that come with building AI models at scale," Khlaaf said.
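To make the "671B total parameters with 37B activated for each token" idea concrete, here is a minimal sketch of top-k expert routing, the general mechanism behind a Mixture-of-Experts layer. It is a toy illustration and not DeepSeek's or Mixtral's actual implementation: the function names (`route_top_k`, `moe_forward`), the four scalar "experts", and the router scores are all assumptions made up for this example.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_scores, k):
    """Run only the selected experts and mix their outputs by router weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))

# Hypothetical toy experts: each one just scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
scores = [0.1, 2.0, -1.0, 1.5]  # made-up router scores for one token

selected = route_top_k(scores, k=2)
output = moe_forward(1.0, experts, scores, k=2)

# Share of parameters active per token, using the figures quoted in the text.
active_fraction = 37 / 671

print(selected)
print(output)
print(f"{active_fraction:.1%} of parameters active per token")
```

Only two of the four experts run for this token, which is why such a model can be very large in total while the per-token compute stays much smaller. With the quoted figures, roughly 5.5% of the parameters are active for any one token.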

"DeepSeek V2.5 is the precise best performing open-supply model I’ve tested, inclusive of the 405B variants," he wrote, additional underscoring the model’s potential. And it could also be helpful for a Defense Department tasked with capturing the best AI capabilities while concurrently reining in spending. DeepSeek’s performance-insofar as it shows what is possible-will give the Defense Department more leverage in its discussions with industry, and permit the division to search out extra opponents. DeepSeek's claim that its R1 synthetic intelligence (AI) model was made at a fraction of the cost of its rivals has raised questions about the long run about of the entire industry, and brought about some the world's greatest companies to sink in value. For normal questions and discussions, please use GitHub Discussions. A general use mannequin that combines advanced analytics capabilities with a vast thirteen billion parameter count, enabling it to carry out in-depth data evaluation and help complex choice-making processes. OpenAI and its partners just announced a $500 billion Project Stargate initiative that will drastically accelerate the development of inexperienced energy utilities and AI data centers throughout the US. It’s a analysis project. High throughput: DeepSeek V2 achieves a throughput that is 5.76 times larger than DeepSeek 67B. So it’s able to producing textual content at over 50,000 tokens per second on commonplace hardware.
