The Preferred Deepseek

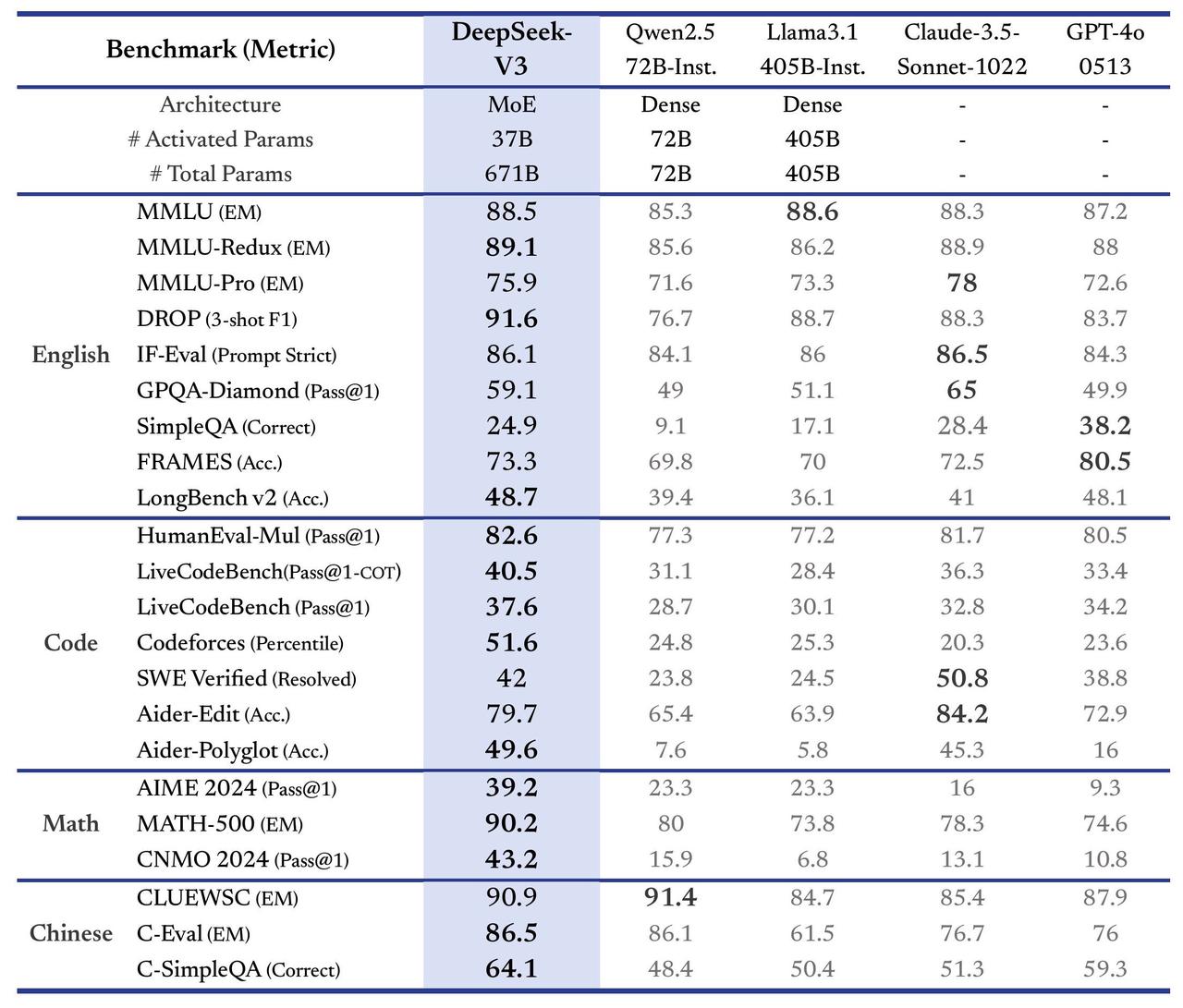

DeepSeek said it used just 2,048 Nvidia H800 graphics cards and spent $5.6mn to train its V3 model with 671bn parameters, a fraction of what OpenAI and Google spent to train comparably sized models. To date, the CAC has greenlighted models such as Baichuan and Qianwen, which do not have security protocols as comprehensive as DeepSeek's. The study also suggests that the regime's censorship tactics represent a strategic decision balancing political security and the goals of technological development. Even so, LLM development is a nascent and rapidly evolving field - in the long run, it is uncertain whether Chinese developers will have the hardware capacity and talent pool to surpass their US counterparts. Even so, keyword filters limited their ability to answer sensitive questions. The output quality of Qianwen and Baichuan also approached ChatGPT4 for questions that didn't touch on sensitive topics - especially for their responses in English. And if you think these kinds of questions deserve more sustained analysis, and you work at a philanthropy or research organization interested in understanding China and AI from the models on up, please reach out!

Q: Is China a country with the rule of law or is it a country with rule by law? A: China is a socialist country ruled by law. A: China is often called a "rule of law" rather than a "rule by law" country. When we asked the Baichuan web model the same question in English, however, it gave us a response that both properly explained the difference between the "rule of law" and "rule by law" and asserted that China is a country with rule by law. While the Chinese government maintains that the PRC implements the socialist "rule of law," Western scholars have commonly criticized the PRC as a country with "rule by law" due to the lack of judicial independence. But beneath all of this I have a sense of lurking horror - AI systems have got so useful that the thing that will set humans apart from one another is not specific hard-won skills for using AI systems, but rather just having a high level of curiosity and agency. In fact, the health care systems in many countries are designed to ensure that all people are treated equally for medical care, regardless of their income.

Based on these facts, I agree that a wealthy person is entitled to better medical services if they pay a premium for them. Why this matters - synthetic data is working everywhere you look: Zoom out and Agent Hospital is another example of how we can bootstrap the performance of AI systems by carefully mixing synthetic data (patient and medical professional personas and behaviors) and real data (medical records). It is an open-source framework offering a scalable approach to studying multi-agent systems' cooperative behaviours and capabilities. In tests, they find that language models like GPT 3.5 and 4 are already able to construct reasonable biological protocols, representing further evidence that today's AI systems have the ability to meaningfully automate and accelerate scientific experimentation. Overall, Qianwen and Baichuan are most likely to generate answers that align with free-market and liberal principles on Hugging Face and in English. Overall, ChatGPT gave the best answers - but we're still impressed by the level of "thoughtfulness" that Chinese chatbots display. Cody is built on model interoperability and we aim to provide access to the best and latest models, and today we're making an update to the default models offered to Enterprise customers.

DeepSeek Coder models are trained with a 16,000 token window size and an extra fill-in-the-blank task to enable project-level code completion and infilling. Copilot has two parts today: code completion and "chat". A common use case is to complete the code for the user after they provide a descriptive comment. Groq provides an API to use its new LPUs with a number of open source LLMs (including Llama 3 8B and 70B) on its GroqCloud platform. The goal of this post is to deep-dive into LLMs that are specialized in code generation tasks, and see if we can use them to write code. This disparity could be attributed to their training data: English and Chinese discourses are influencing the training data of these models. One is the differences in their training data: it is possible that DeepSeek is trained on more Beijing-aligned data than Qianwen and Baichuan. The subsequent training stages after pre-training require only 0.1M GPU hours. DeepSeek's language models, designed with architectures akin to LLaMA, underwent rigorous pre-training.
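To make the fill-in-the-blank idea concrete, here is a minimal sketch of how an infilling prompt might be assembled from the code before and after the cursor. The sentinel strings (`<FIM_BEGIN>`, `<FIM_HOLE>`, `<FIM_END>`) and both function names are placeholders invented for illustration; they are not DeepSeek Coder's actual special tokens, so check the model's own documentation for the real ones and for the ordering it expects.

```python
def split_at_cursor(source: str, cursor: int) -> tuple[str, str]:
    """Split a source file into the text before and after the cursor."""
    if not 0 <= cursor <= len(source):
        raise ValueError("cursor is outside the source text")
    return source[:cursor], source[cursor:]


def build_fim_prompt(
    prefix: str,
    suffix: str,
    begin: str = "<FIM_BEGIN>",  # hypothetical sentinel, not a real model token
    hole: str = "<FIM_HOLE>",    # hypothetical sentinel marking the gap to fill
    end: str = "<FIM_END>",      # hypothetical sentinel closing the prompt
) -> str:
    """Wrap prefix and suffix in sentinels so a model can generate the gap."""
    return f"{begin}{prefix}{hole}{suffix}{end}"


if __name__ == "__main__":
    source = (
        "# return the larger of two numbers\n"
        "def largest(a, b):\n"
        "    \n"
        "print(largest(2, 3))\n"
    )
    # Place the cursor at the end of the indented blank line in the body.
    cursor = source.index("    \n") + len("    ")
    prefix, suffix = split_at_cursor(source, cursor)
    print(build_fim_prompt(prefix, suffix))
```

The descriptive comment at the top of the file lands in the prefix, which matches the use case described above: the model sees the comment plus the surrounding code and is asked to generate only the missing body.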
