59% of the Market Is Interested in DeepSeek

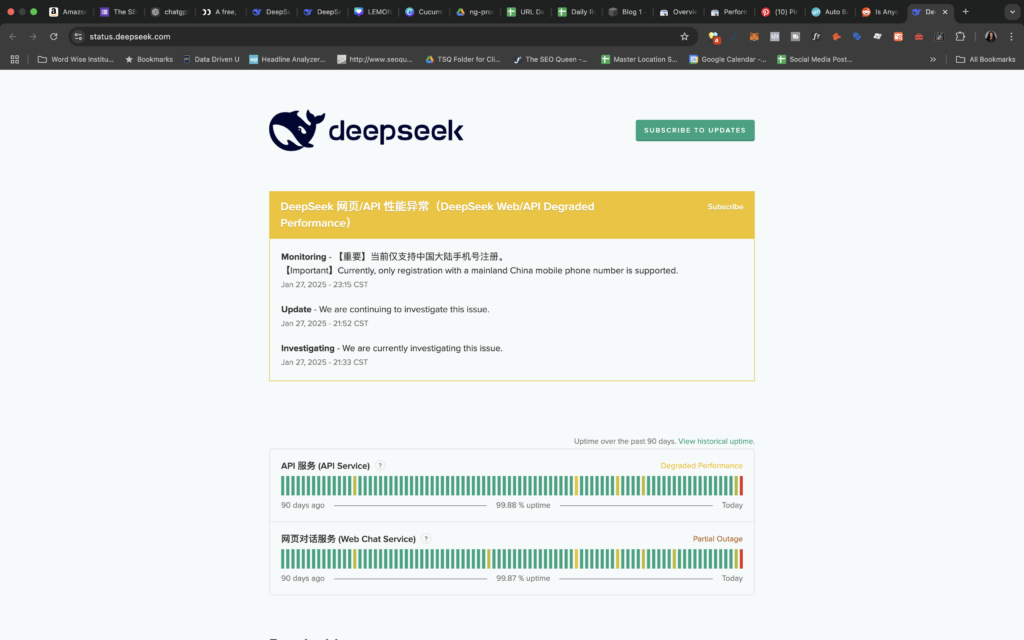

DeepSeek offers AI of comparable quality to ChatGPT but is completely free to use in chatbot form. The truly disruptive factor is that we must set ethical guidelines to ensure the constructive use of AI. To train the model, we needed a suitable problem set (the given "training set" of this competition is too small for fine-tuning) with "ground truth" solutions in ToRA format for supervised fine-tuning. But I also read that if you specialize models to do less, you can make them great at it. This led me to "codegpt/deepseek-coder-1.3b-typescript": this model is very small in terms of parameter count, and it is based on a deepseek-coder model that was then fine-tuned using only TypeScript code snippets. If your machine doesn't support these LLMs well (unless you have an M1 or above, you're in this category), then there is the following alternative solution I've found. Ollama is essentially Docker for LLM models; it allows us to quickly run various LLMs and host them over standard completion APIs locally. On 9 January 2024, DeepSeek released two DeepSeek-MoE models (Base and Chat), each with 16B parameters (2.7B activated per token, 4K context length). On 27 January 2025, DeepSeek restricted new user registration to Chinese mainland phone numbers, email, and Google login after a cyberattack slowed its servers.
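As a rough illustration of what "hosting a model over a standard completion API locally" can look like, here is a minimal Python sketch that talks to a local Ollama server. It assumes Ollama is running on its default address (`http://localhost:11434`) and that a model has already been pulled; the tag `deepseek-coder:1.3b` and the helper names `build_request` and `complete` are placeholders chosen for this example, not something the post specifies.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "deepseek-coder:1.3b") -> urllib.request.Request:
    """Build a non-streaming completion request for a local Ollama server.

    The model tag is an assumption; substitute whichever model you pulled.
    """
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str, model: str = "deepseek-coder:1.3b") -> str:
    """Send the prompt and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False, Ollama returns one JSON object whose
    # "response" field holds the completion text.
    return body["response"]


# Example call, commented out because it requires a running Ollama server:
# print(complete("Write a TypeScript function that reverses a string."))
```

Setting `"stream": False` keeps the sketch simple by returning one JSON object; for interactive use you would more likely stream tokens as they arrive.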


Lastly, should leading American academic institutions continue their extremely close collaborations with researchers associated with the Chinese government? From what I've read, the primary driver of the cost savings was bypassing the expensive human labor associated with supervised training. These chips are quite large, and both NVIDIA and AMD need to recoup engineering costs. So is NVIDIA going to lower prices because of FP8 training costs? DeepSeek demonstrates that competitive models 1) do not need as much hardware to train or infer, 2) can be open-sourced, and 3) can use hardware other than NVIDIA's (in this case, AMD's). With the ability to seamlessly integrate multiple APIs, including OpenAI, Groq Cloud, and Cloudflare Workers AI, I have been able to unlock the full potential of these powerful AI models. Multiple different quantisation formats are provided, and most users only need to pick and download a single file. Regardless of how much money we spend, in the end, the benefits go to the common users.
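To make the quantisation remark above a little more concrete, here is a back-of-the-envelope Python sketch of how the choice of format affects download size. It assumes the file is dominated by the weights and that each weight takes a fixed number of bits; real quantised files carry extra metadata and often mix precisions, so treat the numbers as rough lower bounds. The function name `estimate_weights_gb` and the bit widths shown are illustrative choices; only the 1.3B parameter count comes from the model named earlier.

```python
def estimate_weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes).

    Assumption: every parameter is stored at the same bit width and
    file-format overhead is ignored.
    """
    return n_params * bits_per_weight / 8 / 1e9


# A 1.3B-parameter model at a few common (assumed) bit widths.
for bits in (16, 8, 4):
    size = estimate_weights_gb(1.3e9, bits)
    print(f"{bits}-bit weights: roughly {size:.2f} GB")
```

The point is only that halving the bits per weight roughly halves the file, which is why picking a single, suitably small quantised file is usually enough for local use.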

In short, DeepSeek feels very much like ChatGPT without all the bells and whistles. There's not much more that I've found. Real-world test: they tested GPT-3.5 and GPT-4 and found that GPT-4, when equipped with tools like retrieval-augmented generation to access documentation, succeeded and "generated two new protocols using pseudofunctions from our database." In 2023, High-Flyer started DeepSeek as a lab dedicated to researching AI tools, separate from its financial business.

Janus-Pro is a novel autoregressive framework that unifies multimodal understanding and generation. It is a unified understanding and generation MLLM that decouples visual encoding for the two tasks. It addresses the limitations of earlier approaches by decoupling visual encoding into separate pathways, while still using a single, unified transformer architecture for processing. The decoupling not only alleviates the conflict between the visual encoder's roles in understanding and generation, but also enhances the framework's flexibility. Janus-Pro is built on DeepSeek-LLM-1.5b-base/DeepSeek-LLM-7b-base. It surpasses previous unified models and matches or exceeds the performance of task-specific models. AI's future isn't in who builds the best models or applications; it's in who controls the computational bottleneck.

The above covers best practices on how to give the model its context, along with the prompt engineering techniques that the authors suggest have a positive effect on results. The original GPT-4 was rumored to have around 1.7T parameters. From 1 and 2, you should now have a hosted LLM model running. By incorporating 20 million Chinese multiple-choice questions, DeepSeek LLM 7B Chat demonstrates improved scores in MMLU, C-Eval, and CMMLU. If we choose to compete we can still win, and, if we do, we will have a Chinese company to thank. We could, for very logical reasons, double down on defensive measures, like massively expanding the chip ban and imposing a permission-based regulatory regime on chips and semiconductor equipment that mirrors the E.U.'s approach to tech; alternatively, we could realize that we have real competition, and actually give ourselves permission to compete. I mean, it's not like they found a car.
