How To Turn Your DeepSeek From Zero To Hero

DeepSeek has only really entered mainstream discourse in the past few months, so I expect more research to go toward replicating, validating, and improving MLA (Multi-head Latent Attention). Parameter count often (but not always) correlates with capability; models with more parameters tend to outperform models with fewer parameters. However, a model with 22B parameters and a non-production license requires quite a bit of VRAM and can only be used for research and testing purposes, so it may not be the best fit for daily local use. (Last updated 01 Dec, 2023.) In a recent development, the DeepSeek LLM has emerged as a formidable force in the realm of language models, boasting an impressive 67 billion parameters. Where can we find large language models? Large language models are undoubtedly the biggest part of the current AI wave and are currently the area where most research and investment is going. There’s no leaving OpenAI and saying, "I’m going to start a company and dethrone them." It’s kind of crazy. We tried. We had some ideas that we wanted people to leave those companies and start, and it’s really hard to get them out of it.
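To make the VRAM remark concrete, here is a rough back-of-the-envelope sketch. It assumes memory is dominated by the weights alone (parameter count times bytes per parameter) and ignores activations, KV cache, and runtime overhead, so real usage will be higher. The function names and the precision table are illustrative, not taken from any particular library.

```python
# Rough weight-only memory estimate: params * bytes_per_param.
# Assumption: activations, KV cache and framework overhead are ignored.

# Bytes per parameter for a few common storage precisions (illustrative table).
PRECISIONS = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def estimate(num_params: float) -> dict:
    """Return the weight-only estimate for every precision in PRECISIONS."""
    return {name: weight_memory_gb(num_params, b) for name, b in PRECISIONS.items()}


if __name__ == "__main__":
    # The two parameter counts mentioned in the text: 22B and 67B.
    for label, n in [("22B", 22e9), ("67B", 67e9)]:
        for precision, gb in estimate(n).items():
            print(f"{label} @ {precision}: ~{gb:.1f} GB for weights")
```

Under these assumptions a 22B-parameter model needs roughly 44 GB for fp16 weights, which is why it is a poor fit for most consumer GPUs unless it is quantized.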

You see a company - people leaving to start those kinds of companies - but outside of that it’s hard to convince founders to leave. It’s not a product. Things like that. That's not really in the OpenAI DNA so far in product. Systems like AutoRT tell us that in the future we’ll not only use generative models to directly control things, but also to generate data for the things they cannot yet control. I use this analogy of synchronous versus asynchronous AI. You use their chat completion API. Assuming you have a chat model set up already (e.g. Codestral, Llama 3), you can keep this whole experience local thanks to embeddings with Ollama and LanceDB. This model demonstrates how LLMs have improved for programming tasks. The model was pretrained on "a diverse and high-quality corpus comprising 8.1 trillion tokens" (and as is common these days, no other information about the dataset is available). "We conduct all experiments on a cluster equipped with NVIDIA H800 GPUs." DeepSeek has created an algorithm that enables an LLM to bootstrap itself by starting with a small dataset of labeled theorem proofs and creating increasingly higher-quality examples to fine-tune itself. But when the space of possible proofs is significantly large, the models are still slow.

Tesla still has a first mover advantage for sure. But anyway, the myth that there is a first mover advantage is well understood. That was a big first quarter. All this can run entirely on your own laptop, or you can have Ollama deployed on a server to remotely power code completion and chat experiences based on your needs. When combined with the code that you ultimately commit, it can be used to improve the LLM that you or your team use (if you allow it). This part of the code handles potential errors from string parsing and factorial computation gracefully. They minimized communication latency by extensively overlapping computation and communication, such as dedicating 20 streaming multiprocessors out of 132 per H800 solely to inter-GPU communication. "At an economical cost of only 2.664M H800 GPU hours, we complete the pre-training of DeepSeek-V3 on 14.8T tokens, producing the currently strongest open-source base model." The safety data covers "various sensitive topics" (and because this is a Chinese company, some of that will be aligning the model with the preferences of the CCP/Xi Jinping - don’t ask about Tiananmen!). The Sapiens models are good because of scale - specifically, lots of data and lots of annotations.
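The training figures quoted above are easier to compare once normalized. The following is a small arithmetic sketch using only the numbers in the text (2.664M H800 GPU hours, 14.8T tokens, 20 of 132 streaming multiprocessors); the function and constant names are made up for illustration, and nothing here says how the training run was actually scheduled.

```python
# Normalize the figures quoted in the text. All inputs come from the text;
# the helper names are hypothetical.

GPU_HOURS = 2.664e6      # total H800 GPU hours reported for pre-training
TOKENS = 14.8e12         # pre-training tokens
COMM_SMS = 20            # streaming multiprocessors reserved for communication
TOTAL_SMS = 132          # streaming multiprocessors per H800


def gpu_hours_per_trillion_tokens(gpu_hours: float, tokens: float) -> float:
    """GPU hours spent per one trillion training tokens."""
    return gpu_hours / (tokens / 1e12)


def comm_sm_fraction(comm_sms: int, total_sms: int) -> float:
    """Share of a GPU's streaming multiprocessors set aside for communication."""
    return comm_sms / total_sms


if __name__ == "__main__":
    rate = gpu_hours_per_trillion_tokens(GPU_HOURS, TOKENS)
    share = comm_sm_fraction(COMM_SMS, TOTAL_SMS)
    print(f"GPU hours per trillion tokens: {rate:,.0f}")
    print(f"SMs reserved for communication: {share:.1%}")
```

By this arithmetic the run works out to about 180 thousand GPU hours per trillion tokens, with roughly 15% of each GPU's streaming multiprocessors dedicated to inter-GPU communication.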

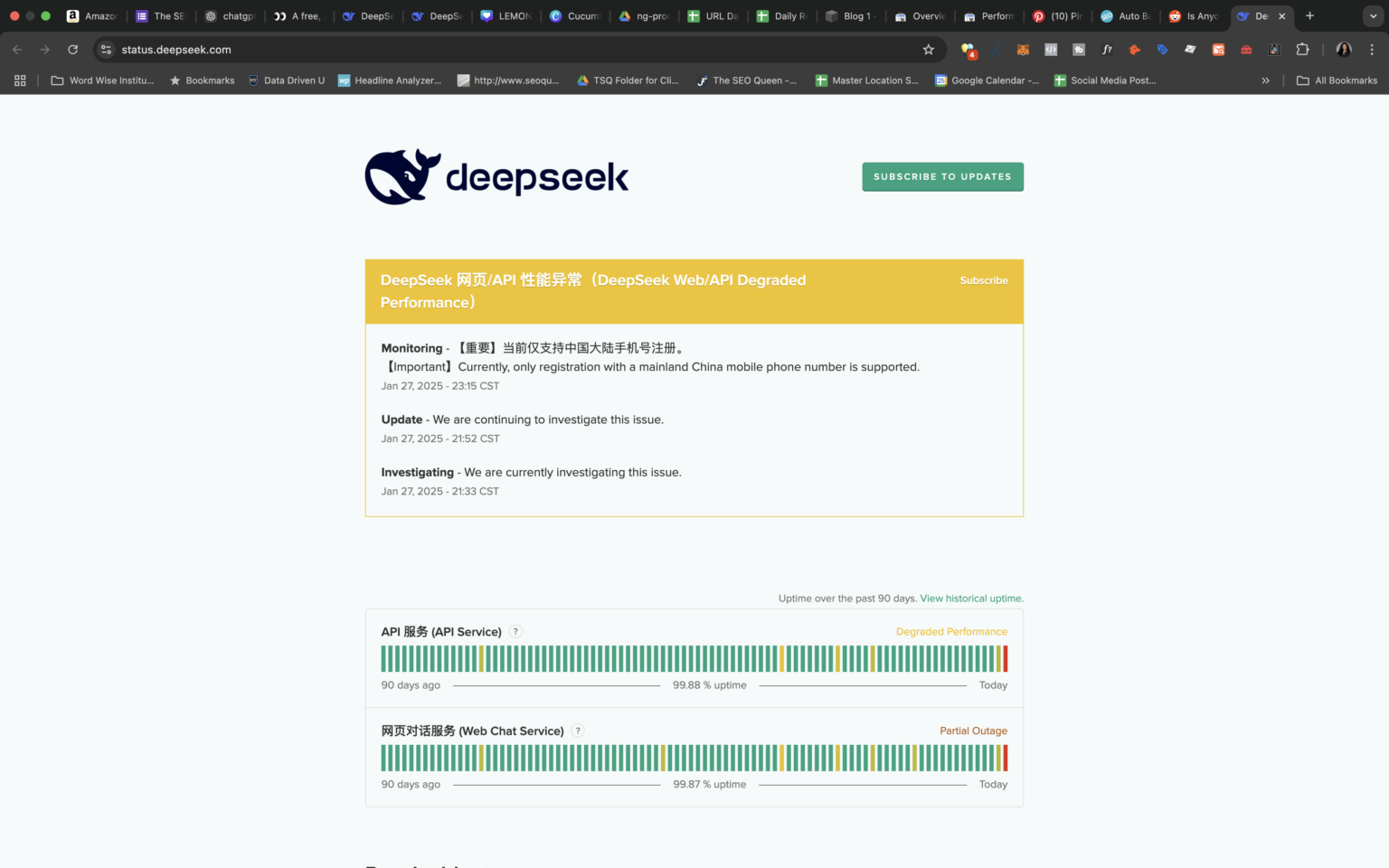

We’ve heard lots of stories - probably personally as well as reported in the news - about the challenges DeepMind has had in changing modes from "we’re just researching and doing stuff we think is cool" to Sundar saying, "Come on, I’m under the gun here." While we have seen attempts to introduce new architectures such as Mamba and more recently xLSTM, to name just a few, it seems likely that the decoder-only transformer is here to stay - at least for the most part. Usage details are available here. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead. That is, they can use it to improve their own foundation model a lot faster than anyone else can. The deepseek-chat model has been upgraded to DeepSeek-V3. DeepSeek-V3 achieves a significant breakthrough in inference speed over previous models. DeepSeek-V3 uses significantly fewer resources compared to its peers; for example, whereas the world's leading A.I.

