Deepseek Promotion one hundred and one

페이지 정보

본문

It’s known as DeepSeek R1, and it’s rattling nerves on Wall Street. He’d let the automobile publicize his location and so there have been folks on the road taking a look at him as he drove by. These giant language fashions need to load fully into RAM or Deepseek (photoclub.canadiangeographic.ca) VRAM every time they generate a new token (piece of text). For comparison, excessive-end GPUs like the Nvidia RTX 3090 boast practically 930 GBps of bandwidth for his or her VRAM. GPTQ models benefit from GPUs just like the RTX 3080 20GB, A4500, A5000, and the likes, demanding roughly 20GB of VRAM. Having CPU instruction sets like AVX, AVX2, AVX-512 can additional improve efficiency if available. Furthermore, DeepSeek-V3 pioneers an auxiliary-loss-free strategy for load balancing and units a multi-token prediction coaching objective for stronger performance. Trained on 14.Eight trillion diverse tokens and incorporating superior methods like Multi-Token Prediction, DeepSeek v3 sets new requirements in AI language modeling. On this state of affairs, you possibly can expect to generate roughly 9 tokens per second. Send a check message like "hi" and check if you can get response from the Ollama server.

It’s known as DeepSeek R1, and it’s rattling nerves on Wall Street. He’d let the automobile publicize his location and so there have been folks on the road taking a look at him as he drove by. These giant language fashions need to load fully into RAM or Deepseek (photoclub.canadiangeographic.ca) VRAM every time they generate a new token (piece of text). For comparison, excessive-end GPUs like the Nvidia RTX 3090 boast practically 930 GBps of bandwidth for his or her VRAM. GPTQ models benefit from GPUs just like the RTX 3080 20GB, A4500, A5000, and the likes, demanding roughly 20GB of VRAM. Having CPU instruction sets like AVX, AVX2, AVX-512 can additional improve efficiency if available. Furthermore, DeepSeek-V3 pioneers an auxiliary-loss-free strategy for load balancing and units a multi-token prediction coaching objective for stronger performance. Trained on 14.Eight trillion diverse tokens and incorporating superior methods like Multi-Token Prediction, DeepSeek v3 sets new requirements in AI language modeling. On this state of affairs, you possibly can expect to generate roughly 9 tokens per second. Send a check message like "hi" and check if you can get response from the Ollama server.

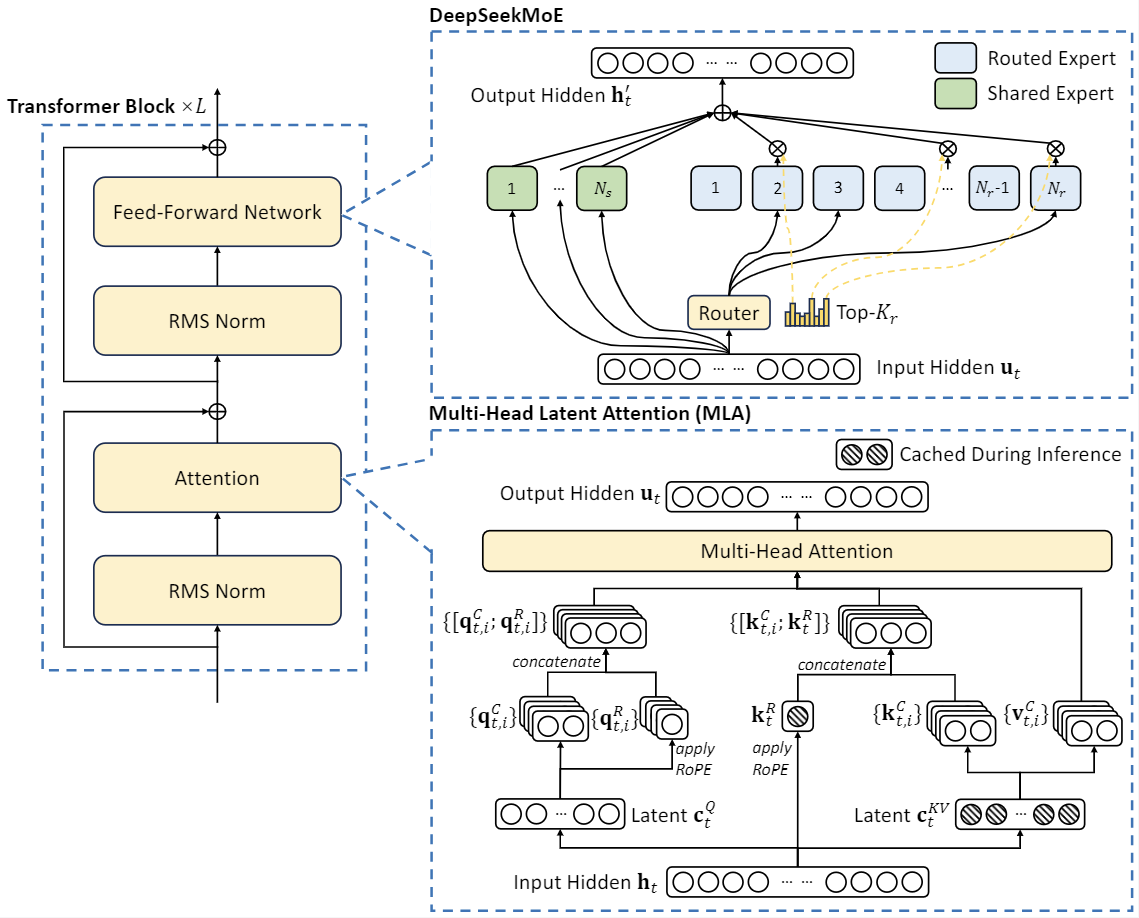

If you don't have Ollama installed, check the earlier weblog. You can use that menu to chat with the Ollama server with out needing an online UI. You can launch a server and question it utilizing the OpenAI-appropriate imaginative and prescient API, which supports interleaved textual content, multi-picture, and video formats. Explore all variations of the model, their file formats like GGML, GPTQ, and HF, and perceive the hardware necessities for native inference. If you're venturing into the realm of larger fashions the hardware requirements shift noticeably. The efficiency of an Deepseek model relies upon closely on the hardware it is running on. Note: Unlike copilot, we’ll focus on domestically operating LLM’s. Multi-Head Latent Attention (MLA): In a Transformer, attention mechanisms assist the model give attention to essentially the most related components of the input. In case your system doesn't have fairly enough RAM to fully load the mannequin at startup, you may create a swap file to help with the loading. RAM wanted to load the model initially. Suppose your have Ryzen 5 5600X processor and DDR4-3200 RAM with theoretical max bandwidth of fifty GBps. An Intel Core i7 from 8th gen onward or AMD Ryzen 5 from third gen onward will work effectively. The GTX 1660 or 2060, AMD 5700 XT, or RTX 3050 or 3060 would all work nicely.

For Best Performance: Opt for a machine with a high-finish GPU (like NVIDIA's newest RTX 3090 or RTX 4090) or dual GPU setup to accommodate the largest models (65B and 70B). A system with enough RAM (minimal sixteen GB, but sixty four GB best) could be optimum. For recommendations on the perfect computer hardware configurations to handle Deepseek fashions easily, take a look at this guide: Best Computer for Running LLaMA and LLama-2 Models. But, if an concept is effective, it’ll discover its approach out just because everyone’s going to be speaking about it in that actually small community. Emotional textures that humans find fairly perplexing. Within the fashions checklist, add the fashions that installed on the Ollama server you want to use within the VSCode. Open the directory with the VSCode. Without specifying a selected context, it’s important to notice that the precept holds true in most open societies but doesn't universally hold throughout all governments worldwide. It’s considerably more efficient than different models in its class, gets nice scores, and the research paper has a bunch of particulars that tells us that DeepSeek has constructed a workforce that deeply understands the infrastructure required to prepare bold fashions.

If you happen to look closer at the results, it’s worth noting these numbers are closely skewed by the easier environments (BabyAI and Crafter). This model marks a considerable leap in bridging the realms of AI and excessive-definition visible content material, providing unprecedented alternatives for professionals in fields where visible detail and accuracy are paramount. For instance, a system with DDR5-5600 providing around 90 GBps might be sufficient. This means the system can better understand, generate, and edit code compared to earlier approaches. But perhaps most significantly, buried in the paper is a crucial insight: you possibly can convert just about any LLM right into a reasoning mannequin should you finetune them on the proper combine of information - here, 800k samples showing questions and answers the chains of thought written by the mannequin whereas answering them. Flexing on how a lot compute you've gotten access to is frequent apply among AI companies. After weeks of focused monitoring, we uncovered a way more significant risk: a notorious gang had begun purchasing and sporting the company’s uniquely identifiable apparel and utilizing it as an emblem of gang affiliation, posing a big risk to the company’s image by means of this unfavourable association.

- 이전글11 Ways To Totally Defy Your Blown Window Repair Near Me 25.02.01

- 다음글9 . What Your Parents Teach You About ADHD Assessment Uk Adults 25.02.01

댓글목록

등록된 댓글이 없습니다.