Nine Things You May Learn From Buddhist Monks About DeepSeek


To ensure unbiased and thorough performance assessments, DeepSeek AI designed new problem sets, such as the Hungarian National High-School Exam and Google's instruction-following evaluation dataset. The evaluation results demonstrate that the distilled smaller dense models perform exceptionally well on benchmarks. They've got the intuitions about scaling up models. Its latest version was released on 20 January, quickly impressing AI experts before it got the attention of the entire tech industry - and the world. Its V3 model raised some awareness about the company, although its content restrictions around sensitive topics about the Chinese government and its leadership sparked doubts about its viability as an industry competitor, the Wall Street Journal reported. These programs again learn from huge swathes of data, including online text and images, to be able to make new content. AI can, at times, make a computer seem like a person. By 27 January 2025 the app had surpassed ChatGPT as the highest-rated free app on the iOS App Store in the United States; its chatbot reportedly answers questions, solves logic problems and writes computer programs on par with other chatbots on the market, according to benchmark tests used by American A.I. companies. Milmo, Dan; Hawkins, Amy; Booth, Robert; Kollewe, Julia (28 January 2025). "'Sputnik moment': $1tn wiped off US stocks after Chinese firm unveils AI chatbot" - via The Guardian.


The pipeline incorporates two RL stages aimed at discovering improved reasoning patterns and aligning with human preferences, as well as two SFT stages that serve as the seed for the model's reasoning and non-reasoning capabilities. To address these issues and further enhance reasoning performance, we introduce DeepSeek-R1, which incorporates cold-start data before RL. The open source DeepSeek-R1, as well as its API, will benefit the research community to distill better smaller models in the future. Notably, it is the first open research to validate that reasoning capabilities of LLMs can be incentivized purely through RL, without the need for SFT. But now that DeepSeek-R1 is out and available, including as an open weight release, all these forms of control have become moot. DeepSeek-R1-Distill-Qwen-1.5B, DeepSeek-R1-Distill-Qwen-7B, DeepSeek-R1-Distill-Qwen-14B and DeepSeek-R1-Distill-Qwen-32B are derived from the Qwen-2.5 series, which was originally licensed under the Apache 2.0 License, and are now fine-tuned with 800k samples curated with DeepSeek-R1. But it sure makes me wonder just how much money Vercel has been pumping into the React team, how many members of that team it stole and how that affected the React docs and the team itself, either directly or through "my colleague used to work here and now is at Vercel and they keep telling me Next is great".


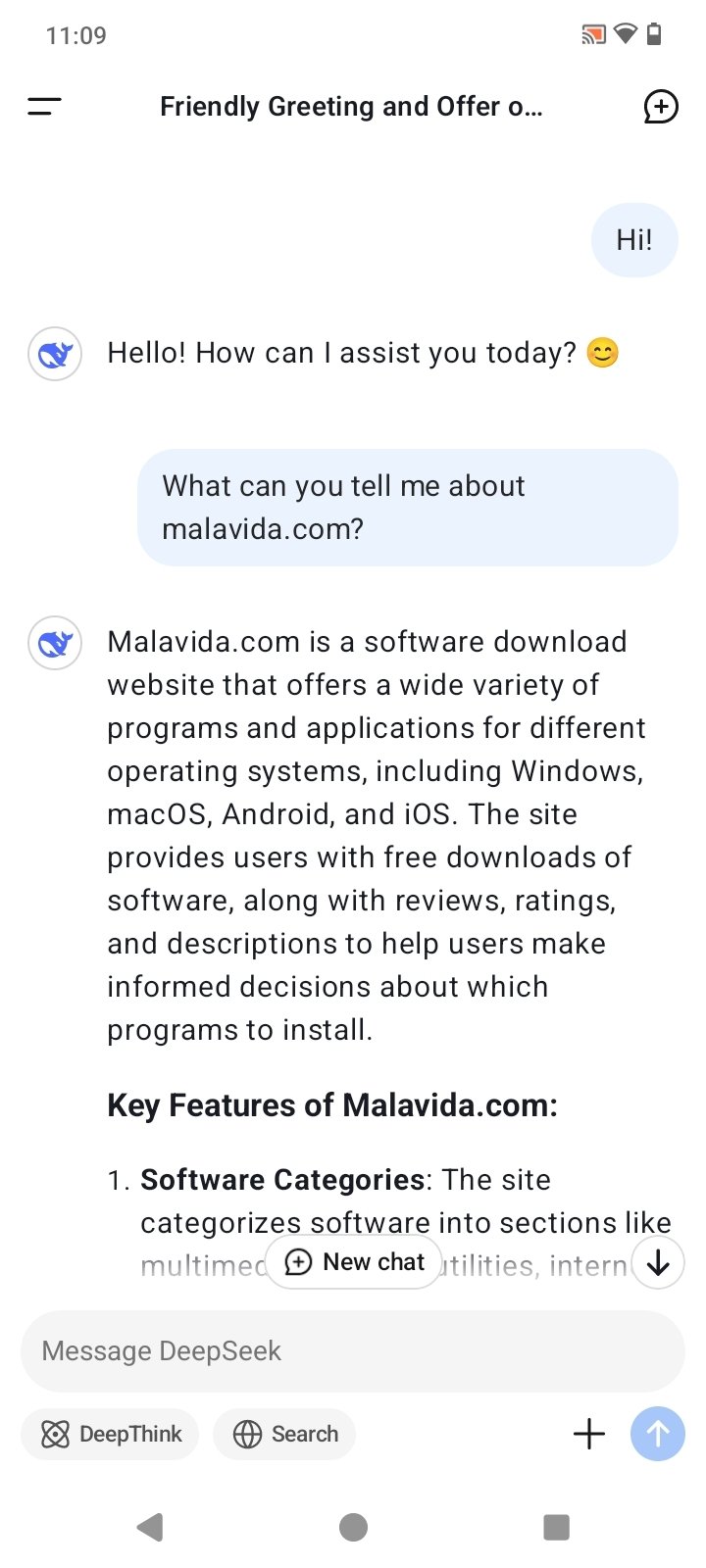

DeepSeek is the name of a free AI-powered chatbot, which looks, feels and works very much like ChatGPT. Millions of people use tools such as ChatGPT to help them with everyday tasks like writing emails, summarising text, and answering questions - and others even use them to help with basic coding and studying. The implementation illustrated the use of pattern matching and recursive calls to generate Fibonacci numbers, with basic error-checking. Be careful with DeepSeek, Australia says - so is it safe to use? Please use our setting to run these models. DeepSeek-R1-Distill models can be utilized in the same manner as Qwen or Llama models. Chinese companies developing the same technologies. You have to understand that Tesla is in a better position than the Chinese to take advantage of new techniques like those used by DeepSeek. What makes DeepSeek so special is the company's claim that it was built at a fraction of the cost of industry-leading models like OpenAI's - because it uses fewer advanced chips. Read the research paper: AutoRT: Embodied Foundation Models for Large Scale Orchestration of Robotic Agents (GitHub, PDF).

Cerebras FLOR-6.3B, Allen AI OLMo 7B, Google TimesFM 200M, AI Singapore Sea-Lion 7.5B, ChatDB Natural-SQL-7B, Brain GOODY-2, Alibaba Qwen-1.5 72B, Google DeepMind Gemini 1.5 Pro MoE, Google DeepMind Gemma 7B, Reka AI Reka Flash 21B, Reka AI Reka Edge 7B, Apple Ask 20B, Reliance Hanooman 40B, Mistral AI Mistral Large 540B, Mistral AI Mistral Small 7B, ByteDance 175B, ByteDance 530B, HF/ServiceNow StarCoder 2 15B, HF Cosmo-1B, SambaNova Samba-1 1.4T CoE. We demonstrate that the reasoning patterns of larger models can be distilled into smaller models, resulting in better performance compared to the reasoning patterns discovered through RL on small models. This approach allows the model to explore chain-of-thought (CoT) for solving complex problems, resulting in the development of DeepSeek-R1-Zero. A machine uses the technology to learn and solve problems, typically by being trained on huge amounts of data and recognising patterns. Reinforcement learning is a type of machine learning where an agent learns by interacting with an environment and receiving feedback on its actions.
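To make the description of reinforcement learning concrete, here is a toy sketch of an agent that learns from feedback: an epsilon-greedy multi-armed bandit. It is a textbook illustration only and is not how DeepSeek-R1 was trained; the function name `run_bandit`, the reward means, and all parameter values are assumptions chosen for the example.

```python
import random


def run_bandit(true_means, steps=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a bandit with Gaussian rewards (toy example)."""
    rng = random.Random(seed)          # seeded so runs are repeatable
    n_arms = len(true_means)
    estimates = [0.0] * n_arms         # agent's running estimate of each arm's reward
    counts = [0] * n_arms              # how often each arm has been pulled

    for _ in range(steps):
        # Explore with probability epsilon, otherwise exploit the best estimate.
        if rng.random() < epsilon:
            action = rng.randrange(n_arms)
        else:
            action = max(range(n_arms), key=lambda a: estimates[a])

        # The environment returns noisy feedback for the chosen action.
        reward = rng.gauss(true_means[action], 1.0)

        # Incremental mean update: move the estimate toward the observed reward.
        counts[action] += 1
        estimates[action] += (reward - estimates[action]) / counts[action]

    return estimates, counts


estimates, counts = run_bandit([0.1, 0.5, 2.0])
most_pulled = max(range(len(counts)), key=lambda a: counts[a])
print("most-pulled arm:", most_pulled)
print("pull counts:", counts)
```

The agent is never told which arm is best; it converges on the highest-reward arm only through the rewards it receives, which is the interaction-and-feedback loop described above.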
