9 Warning Signs Of Your DeepSeek Demise


Initially, DeepSeek created their first model with an architecture similar to other open models like LLaMA, aiming to outperform benchmarks. In all of those, DeepSeek V3 feels very capable, but how it presents its information doesn't feel exactly in line with my expectations from something like Claude or ChatGPT. Hence, after k attention layers, information can move forward by up to k × W tokens. SWA exploits the stacked layers of a transformer to attend to information beyond the window size W. All content containing personal information or subject to copyright restrictions has been removed from our dataset. DeepSeek-Coder and DeepSeek-Math were used to generate 20K code-related and 30K math-related instruction data, then combined with an instruction dataset of 300M tokens. This model was fine-tuned by Nous Research, with Teknium and Emozilla leading the fine-tuning process and dataset curation, Redmond AI sponsoring the compute, and several other contributors. Dataset Pruning: Our system employs heuristic rules and models to refine our training data.
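The k × W claim about sliding-window attention (SWA) can be checked with a small simulation. The sketch below is only an illustration under stated assumptions: the function names are hypothetical, and the window convention (position i attends to positions i − W through i) is one common choice, not something the text above specifies.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when position i may attend to position j.

    Assumed convention: causal attention where i sees j if i - window <= j <= i.
    """
    return [[0 <= i - j <= window for j in range(seq_len)] for i in range(seq_len)]


def reach_after_layers(seq_len, window, layers):
    """How many tokens back the last position can draw information from
    after stacking `layers` sliding-window attention layers."""
    mask = sliding_window_mask(seq_len, window)
    # reachable[i] is the set of input positions that can influence position i.
    reachable = [{i} for i in range(seq_len)]
    for _ in range(layers):
        reachable = [
            set().union(*(reachable[j] for j in range(seq_len) if mask[i][j]))
            for i in range(seq_len)
        ]
    last = seq_len - 1
    return last - min(reachable[last])
```

With a window of 3, two layers let the last token see 6 positions back and four layers 12, matching k × W until the start of the sequence caps the reach.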

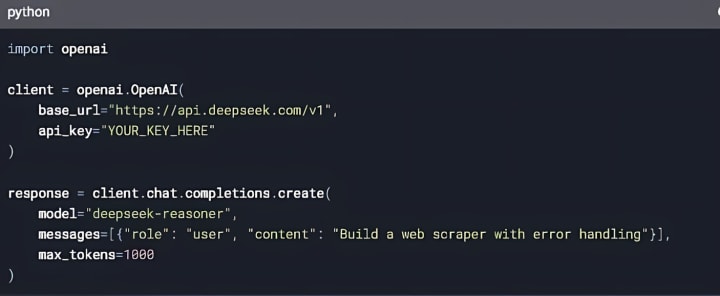

Whether you're a data scientist, business leader, or tech enthusiast, DeepSeek R1 is your ultimate tool to unlock the true potential of your data. Enjoy experimenting with DeepSeek-R1 and exploring the potential of local AI models. By following this guide, you have successfully set up DeepSeek-R1 on your local machine using Ollama. Let's dive into how you can get this model running on your local system. You can also follow me through my YouTube channel. If talking about weights, weights you can publish immediately. I'd say this saved me at least 10-15 minutes of googling for the API documentation and fumbling until I got it right. Depending on your internet speed, this may take a while. This setup offers a robust solution for AI integration, offering privacy, speed, and control over your applications. BTW, having a robust database for your AI/ML applications is a must. We will be using SingleStore as a vector database here to store our data. I recommend using an all-in-one data platform like SingleStore.

I built a serverless application using Cloudflare Workers and Hono, a lightweight web framework for Cloudflare Workers. Below is a complete step-by-step video of using DeepSeek-R1 for different use cases. Or do you feel just like Jayant, who feels constrained in using AI? From the outset, it was free for commercial use and fully open-source. Consequently, we made the decision not to incorporate MC data in the pre-training or fine-tuning process, as it could lead to overfitting on benchmarks. Say hello to DeepSeek R1, the AI-powered platform that's changing the rules of data analytics! So that's another angle. We assessed DeepSeek-V2.5 using industry-standard test sets. 4. RL using GRPO in two stages. As you can see on the Ollama website, you can run DeepSeek-R1 at different parameter sizes: 1.5b, 7b, 8b, 14b, 32b, 70b, and 671b. The hardware requirements increase as you choose a larger parameter count.
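To make the link between parameter count and hardware concrete, here is a rough back-of-the-envelope sketch. It assumes 4-bit quantized weights and counts weight storage only; both are assumptions for illustration, not figures from this post. Real memory use is higher once the context cache and runtime overhead are included, and the helper names are hypothetical.

```python
# Parameter counts (in billions) for the DeepSeek-R1 sizes listed above.
R1_SIZES = {"1.5b": 1.5, "7b": 7, "8b": 8, "14b": 14, "32b": 32, "70b": 70, "671b": 671}


def weight_memory_gb(params_billions, bits_per_weight=4):
    """Approximate GB needed for the weights alone: params * bits / 8 bytes.

    Assumption: 4-bit quantization by default; ignores cache and overhead.
    """
    return params_billions * bits_per_weight / 8


def largest_size_that_fits(budget_gb, bits_per_weight=4):
    """Return the largest listed size whose weights fit in `budget_gb`, or None."""
    fitting = [
        size for size, params in R1_SIZES.items()
        if weight_memory_gb(params, bits_per_weight) <= budget_gb
    ]
    return max(fitting, key=R1_SIZES.get) if fitting else None
```

Treat the result as a lower bound: if `largest_size_that_fits(8)` suggests a size, leave headroom or step down one size on a machine with exactly that much memory.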


What are the minimum hardware requirements to run this? With Ollama, you can easily download and run the DeepSeek-R1 model. If you want to extend your learning and build a simple RAG application, you can follow this tutorial. While much attention in the AI community has been focused on models like LLaMA and Mistral, DeepSeek has emerged as a significant player that deserves closer examination. And just like that, you are interacting with DeepSeek-R1 locally. DeepSeek-R1 stands out for several reasons. You should see deepseek-r1 in the list of available models. This paper presents a new benchmark called CodeUpdateArena to evaluate how well large language models (LLMs) can update their knowledge about evolving code APIs, a critical limitation of current approaches. This can be particularly beneficial for those with urgent medical needs. The ethos of the Hermes series of models is focused on aligning LLMs to the user, with powerful steering capabilities and control given to the end user. End of Model input. This command tells Ollama to download the model.
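Once the model is downloaded, checking the model list and sending a prompt can also be done from code. The sketch below talks to Ollama's local REST API using only the Python standard library. It assumes Ollama is running on its default address (`http://localhost:11434`) and that a tag such as `deepseek-r1:7b` has already been pulled; the helper names are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default local address


def build_generate_request(model, prompt):
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def has_model(tags_payload, family):
    """Check a parsed /api/tags response for any tag of the given model family."""
    names = [m["name"] for m in tags_payload.get("models", [])]
    return any(name.split(":")[0] == family for name in names)


def list_local_models():
    """Fetch the locally available models (requires a running Ollama server)."""
    with urllib.request.urlopen(f"{OLLAMA_URL}/api/tags") as resp:
        return json.loads(resp.read())


def ask(model, prompt):
    """Send one prompt and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

A typical session would call `has_model(list_local_models(), "deepseek-r1")` first, then `ask("deepseek-r1:7b", "Explain sliding-window attention briefly.")` once the model shows up in the list.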

