The Hidden Gem of DeepSeek

DeepSeek says it has been able to do this cheaply - the researchers behind it claim it cost $6m (£4.8m) to train, a fraction of the "over $100m" alluded to by OpenAI boss Sam Altman when discussing GPT-4. Notice how 7-9B models come close to or surpass the scores of GPT-3.5 - the king model behind the ChatGPT revolution. The original GPT-3.5 had 175B params. LLMs around 10B params converge to GPT-3.5 performance, and LLMs around 100B and larger converge to GPT-4 scores. The original GPT-4 was rumored to have around 1.7T params, while GPT-4-Turbo may have as many as 1T params. Could this be another manifestation of convergence?

Introduction (2024-04-15): The goal of this post is to deep-dive into LLMs that are specialized in code generation tasks and see if we can use them to write code. The most powerful use case I have for it is to code moderately complex scripts with one-shot prompts and a few nudges. The callbacks have been set, and the events are configured to be sent to my backend.

Agree. My customers (telco) are asking for smaller models, much more focused on specific use cases, and distributed throughout the network in smaller devices. Superlarge, expensive and generic models are not that useful for the enterprise, even for chats.

But after looking through the WhatsApp documentation and Indian tech videos (yes, we all did look at the Indian IT tutorials), it wasn't really much different from Slack. I could very much figure it out myself if needed, but it's a clear time saver to immediately get a correctly formatted CLI invocation. It's now time for the bot to reply to the message. The model was now talking in rich and detailed terms about itself and the world and the environments it was being exposed to. Alibaba's Qwen model is the world's best open-weight code model (Import AI 392) - and they achieved this through a combination of algorithmic insights and access to data (5.5 trillion high-quality code/math tokens). I hope that further distillation will happen and we will get great and capable models, perfect instruction followers in the 1-8B range. So far, models below 8B are way too basic compared to larger ones.

Agree on the distillation and optimization of models, so that smaller ones become capable enough and we don't have to spend a fortune (money and energy) on LLMs. The promise and edge of LLMs is the pre-trained state - no need to collect and label data or spend time and money training your own specialized models - just prompt the LLM. My point is that perhaps the way to make money out of this is not LLMs, or not only LLMs, but other creatures created through fine-tuning by big companies (or not necessarily such big companies). Yet fine-tuning has too high an entry point compared to simple API access and prompt engineering. I don't subscribe to Claude's pro tier, so I mostly use it within the API console or via Simon Willison's excellent llm CLI tool. Has anyone managed to get the DeepSeek API working? Basically, to get the AI systems to work for you, you had to do a huge amount of thinking. I'm trying to figure out the right incantation to get it to work with Discourse.

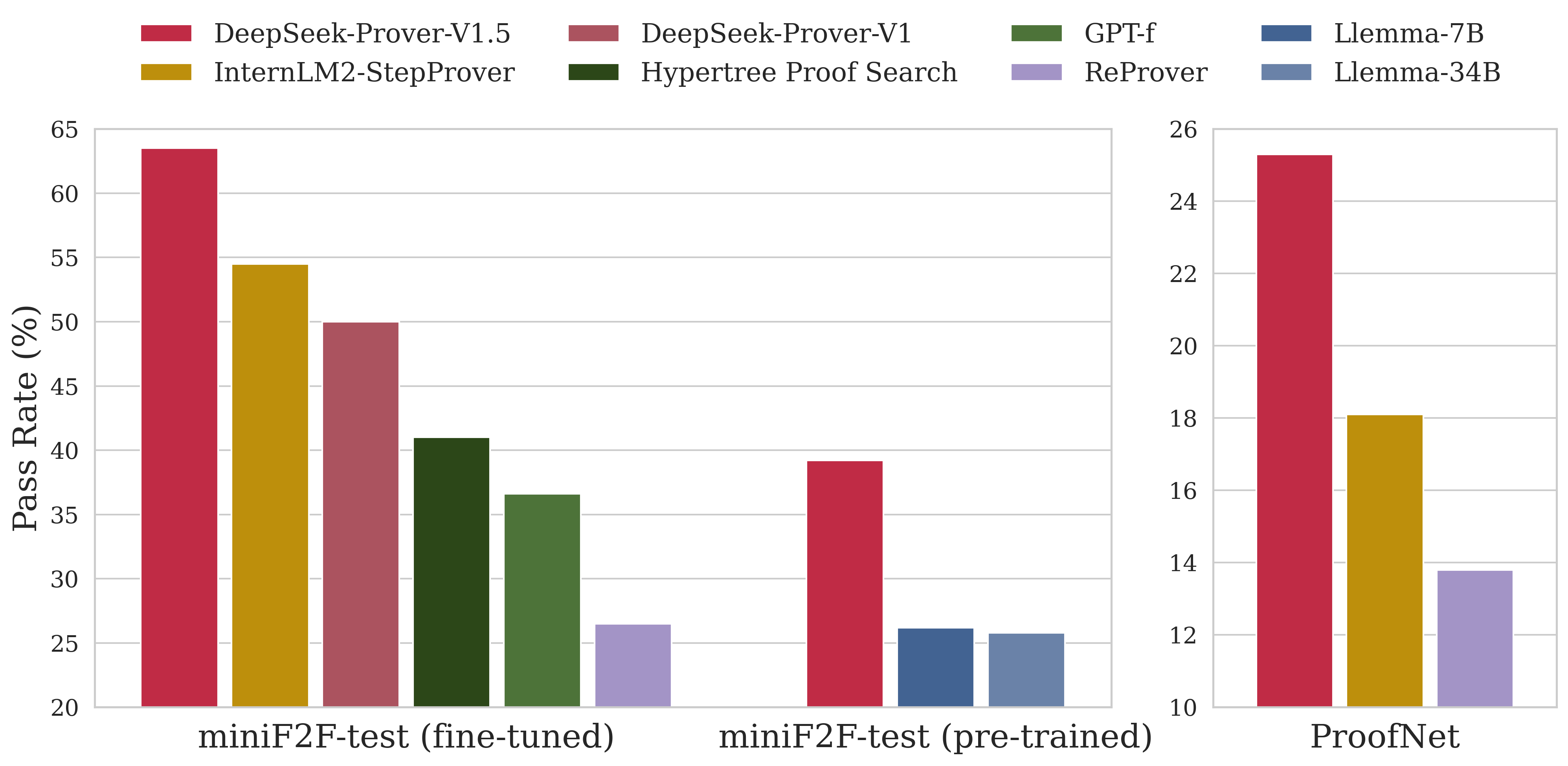

Check out their repository for more information. The original model is 4-6 times more expensive, yet it is four times slower. In other words, you take a bunch of robots (here, some relatively simple Google bots with a manipulator arm and eyes and mobility) and give them access to a giant model. Depending on your internet speed, this might take a while. Depending on the complexity of your existing application, finding the right plugin and configuration might take a bit of time, and adjusting for errors you might encounter could take a while. This time the movement is from old-big-fat-closed models towards new-small-slim-open models. Models converge to the same levels of performance, judging by their evals. The fine-tuning job relied on a rare dataset he'd painstakingly gathered over months - a compilation of interviews psychiatrists had done with patients with psychosis, as well as interviews those same psychiatrists had done with AI systems. GPT macOS App: a surprisingly nice quality-of-life improvement over using the web interface. I don't use any of the screenshotting features of the macOS app yet. Ask for changes - add new features or test cases. 5. They use an n-gram filter to get rid of test data from the train set.
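The n-gram filter mentioned in the last point can be illustrated with a small sketch. The post does not say which n-gram length, tokenizer, or matching rule was used, so everything here is an assumption for illustration: whitespace tokenization, lowercase matching, a default of n = 10, and the hypothetical helper names `ngrams` and `decontaminate`. The idea is simply to drop any training document that shares at least one n-gram with the test set.

```python
from typing import Iterable, List, Set, Tuple

NGram = Tuple[str, ...]


def ngrams(text: str, n: int) -> Set[NGram]:
    """Return the set of word-level n-grams in `text`.

    Assumption: lowercased, whitespace-split tokens stand in for a real tokenizer.
    """
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def decontaminate(train_docs: Iterable[str],
                  test_docs: Iterable[str],
                  n: int = 10) -> List[str]:
    """Keep only training documents that share no n-gram with any test document."""
    # Collect every n-gram that appears anywhere in the test set.
    test_ngrams: Set[NGram] = set()
    for doc in test_docs:
        test_ngrams |= ngrams(doc, n)
    # A training document survives only if none of its n-grams are in that set.
    return [doc for doc in train_docs
            if ngrams(doc, n).isdisjoint(test_ngrams)]


if __name__ == "__main__":
    train = [
        "def add(a, b): return a + b",
        "print the sum of two numbers using a helper function",
    ]
    test = ["def add(a, b): return a + b  # reference solution"]
    # n=4 only so the short toy strings can overlap; the first document is dropped.
    print(decontaminate(train, test, n=4))
```

A shorter n removes more near-duplicates but also more innocent text. Documents with fewer than n tokens produce no n-grams and are always kept in this sketch.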
