DeepSeek-V3 Technical Report

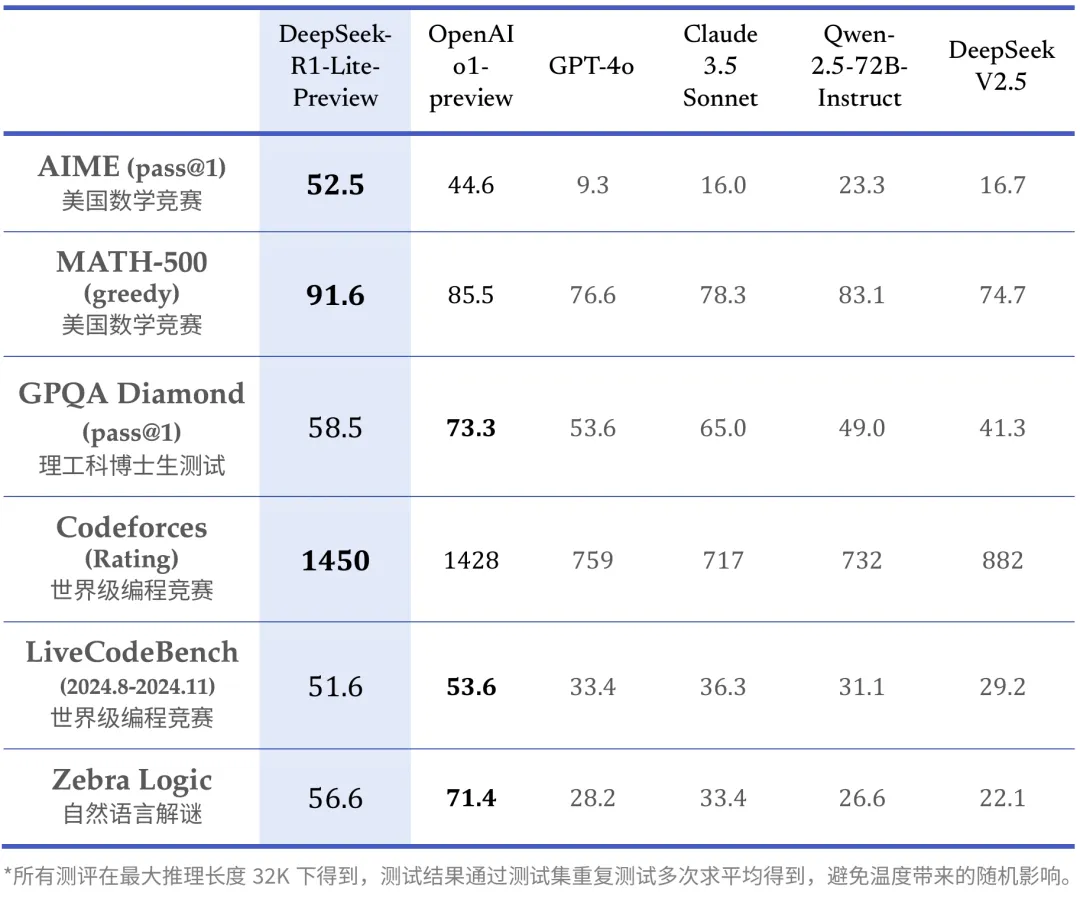

• We introduce an innovative methodology to distill reasoning capabilities from the long-Chain-of-Thought (CoT) model, specifically from one of the DeepSeek R1 series models, into standard LLMs, particularly DeepSeek-V3. What are some alternatives to DeepSeek LLM? An LLM made to complete coding tasks and help new developers. Code Llama is specialized for code-specific tasks and isn't appropriate as a foundation model for other tasks. Some models struggled to follow through or provided incomplete code (e.g., Starcoder, CodeLlama). Its performance is comparable to leading closed-source models like GPT-4o and Claude-Sonnet-3.5, narrowing the gap between open-source and closed-source models in this domain. Like o1, R1 is a "reasoning" model. We demonstrate that the reasoning patterns of larger models can be distilled into smaller models, resulting in better performance compared to the reasoning patterns discovered through RL on small models. "There are 191 easy, 114 medium, and 28 difficult puzzles, with harder puzzles requiring more detailed image recognition, more advanced reasoning techniques, or both," they write. If we get this right, everyone will be able to achieve more and exercise more of their own agency over their own intellectual world.


On the more challenging FIMO benchmark, DeepSeek-Prover solved 4 out of 148 problems with 100 samples, while GPT-4 solved none. See the images: the paper has some remarkable, sci-fi-esque photos of the mines and the drones inside the mine; check it out! He did not know if he was winning or losing, as he was only able to see a small part of the gameboard. This part of the code handles potential errors from string parsing and factorial computation gracefully. The attention part employs 4-way Tensor Parallelism (TP4) with Sequence Parallelism (SP), combined with 8-way Data Parallelism (DP8). Finally, the update rule is the parameter update from PPO that maximizes the reward metrics in the current batch of data (PPO is on-policy, which means the parameters are only updated with the current batch of prompt-generation pairs). Mistral 7B is a 7.3B-parameter open-source (Apache 2.0 license) language model that outperforms much larger models like Llama 2 13B and matches Llama 1 34B on many benchmarks. Its key innovations include Grouped-Query Attention and Sliding Window Attention for efficient processing of long sequences. Others demonstrated simple but clear examples of advanced Rust usage, like Mistral with its recursive approach or Stable Code with parallel processing.
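To make the Sliding Window Attention idea mentioned above concrete, here is a minimal sketch of the attention mask it implies. It assumes a causal window in which each position attends to itself and the `window - 1` positions before it; the function name `sliding_window_mask` is hypothetical, and this is an illustration of the general technique rather than Mistral's actual implementation.

```python
def sliding_window_mask(seq_len: int, window: int) -> list[list[bool]]:
    """Build a causal sliding-window attention mask.

    mask[i][j] is True when query position i may attend to key position j.
    Assumption: position i sees itself and the previous (window - 1) positions.
    """
    if seq_len < 0:
        raise ValueError("seq_len must be non-negative")
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        # j <= i keeps the mask causal; i - j < window limits the look-back.
        [(j <= i) and (i - j < window) for j in range(seq_len)]
        for i in range(seq_len)
    ]


if __name__ == "__main__":
    # Print the mask for a short sequence: 'x' = attend, '.' = masked.
    for row in sliding_window_mask(seq_len=6, window=3):
        print("".join("x" if allowed else "." for allowed in row))
```

Because each row has at most `window` allowed positions, the per-token attention cost stays bounded as the sequence grows, which is the efficiency argument for long sequences.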

The implementation was designed to support multiple numeric types like i32 and u64. Though China is laboring under various compute export restrictions, papers like this highlight how the country hosts numerous talented teams who are capable of non-trivial AI development and invention. For a detailed reading, refer to the papers and links I've attached. Furthermore, in the prefilling stage, to improve the throughput and hide the overhead of all-to-all and TP communication, we simultaneously process two micro-batches with similar computational workloads, overlapping the attention and MoE of one micro-batch with the dispatch and combine of another. To further push the boundaries of open-source model capabilities, we scale up our models and introduce DeepSeek-V3, a large Mixture-of-Experts (MoE) model with 671B parameters, of which 37B are activated for each token. While it trails behind GPT-4o and Claude-Sonnet-3.5 in English factual knowledge (SimpleQA), it surpasses these models in Chinese factual knowledge (Chinese SimpleQA), highlighting its strength in this area. 2) For factuality benchmarks, DeepSeek-V3 demonstrates superior performance among open-source models on both SimpleQA and Chinese SimpleQA.
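The sparse activation described above (671B total parameters, 37B activated per token) comes from sending each token to only a few experts. The sketch below assumes plain softmax top-k routing, which is a generic Mixture-of-Experts scheme and not necessarily the gating function DeepSeek-V3 uses; `top_k_route` and `active_fraction` are hypothetical helper names.

```python
import math


def top_k_route(scores: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and return {expert_index: weight}.

    Assumption: weights are a softmax over the selected experts only,
    so they sum to 1. Real MoE models may normalize differently.
    """
    if not 1 <= k <= len(scores):
        raise ValueError("k must be between 1 and the number of experts")
    # Indices of the k largest scores (ties keep the lower index first).
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    # Subtract the max before exponentiating for numerical stability.
    peak = max(scores[i] for i in top)
    exps = {i: math.exp(scores[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of parameters used per token, e.g. 37B out of 671B."""
    if total_params <= 0:
        raise ValueError("total_params must be positive")
    return active_params / total_params


if __name__ == "__main__":
    print(top_k_route([0.1, 2.0, -1.0, 2.0], k=2))
    print(f"{active_fraction(37, 671):.1%} of parameters active per token")
```

With the figures quoted in the text, roughly 5.5% of the parameters take part in processing any single token.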


Large language models (LLMs) have shown impressive capabilities in mathematical reasoning, but their application in formal theorem proving has been limited by the lack of training data. We adopt the BF16 data format instead of FP32 to track the first and second moments in the AdamW (Loshchilov and Hutter, 2017) optimizer, without incurring observable performance degradation. • On top of the efficient architecture of DeepSeek-V2, we pioneer an auxiliary-loss-free strategy for load balancing, which minimizes the performance degradation that arises from encouraging load balancing. The basic architecture of DeepSeek-V3 is still within the Transformer (Vaswani et al., 2017) framework. Therefore, in terms of architecture, DeepSeek-V3 still adopts Multi-head Latent Attention (MLA) (DeepSeek-AI, 2024c) for efficient inference and DeepSeekMoE (Dai et al., 2024) for cost-effective training. For engineering-related tasks, while DeepSeek-V3 performs slightly below Claude-Sonnet-3.5, it still outpaces all other models by a significant margin, demonstrating its competitiveness across diverse technical benchmarks. In addition, we perform language-modeling-based evaluation for Pile-test and use Bits-Per-Byte (BPB) as the metric to guarantee fair comparison among models using different tokenizers.
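Bits-Per-Byte is tokenizer-independent because it divides the total negative log-likelihood by the number of bytes in the text rather than by the number of tokens. A minimal sketch, assuming per-token losses are given in nats and bytes are counted in UTF-8; the function name `bits_per_byte` is hypothetical and this is not the evaluation code used for the reported numbers.

```python
import math


def bits_per_byte(token_nll_nats: list[float], text: str) -> float:
    """Convert summed token-level NLL (in nats) into bits per UTF-8 byte.

    BPB = (sum of token NLLs in nats) / (ln 2 * number of bytes).
    Two tokenizers that split the same text differently are compared
    over the same byte count, which makes the metric comparable.
    """
    n_bytes = len(text.encode("utf-8"))
    if n_bytes == 0:
        raise ValueError("text must contain at least one byte")
    total_bits = sum(token_nll_nats) / math.log(2)  # nats -> bits
    return total_bits / n_bytes


if __name__ == "__main__":
    # Toy example: two tokens, each costing exactly 2 bits, over 4 bytes.
    losses = [2 * math.log(2), 2 * math.log(2)]
    print(bits_per_byte(losses, "abcd"))
```

A model with a coarser tokenizer has fewer tokens but typically a higher loss per token; dividing by bytes removes that confound.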
