DeepSeek And The Art Of Time Management

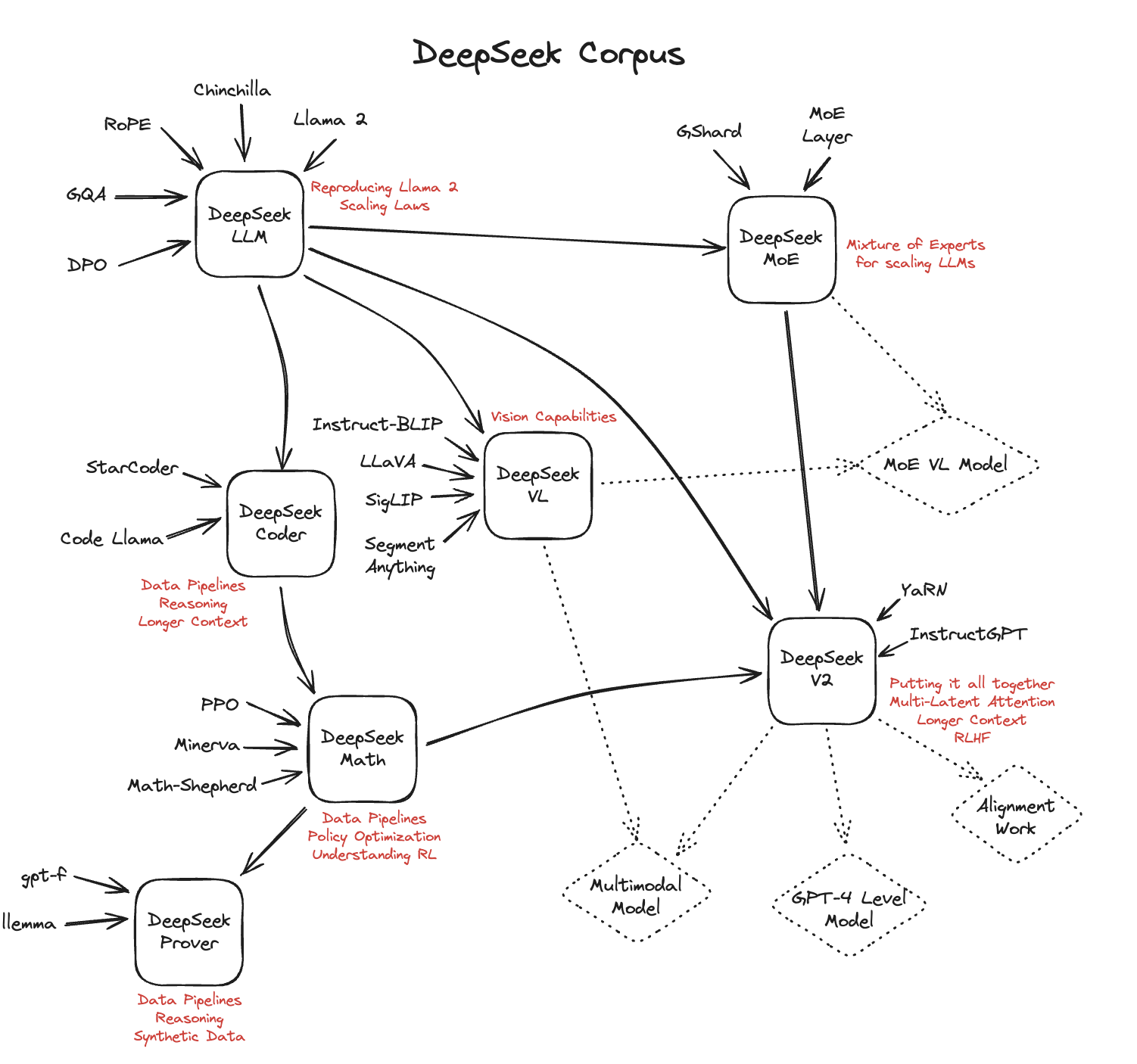

DeepSeek distinguishes itself with robust and versatile features that cater to a wide range of user needs. DeepSeek V3 achieved benchmark scores that matched or beat OpenAI’s GPT-4o and Anthropic’s Claude 3.5 Sonnet. One caution raised is that DeepSeek’s models don’t beat leading closed reasoning models, like OpenAI’s o1, which may still be preferable for the most difficult tasks. Proponents of open AI models, however, have met DeepSeek’s releases with enthusiasm. Better still, DeepSeek offers several smaller, more efficient versions of its main models, known as "distilled models." These have fewer parameters, making them easier to run on less powerful devices. Most "open" models provide only the model weights needed to run or fine-tune the model. As one assessment put it, "DeepSeek-V3 and R1 legitimately come close to matching closed models." The researchers have also explored the potential of DeepSeek-Coder-V2 to push the limits of mathematical reasoning and code generation for large language models, as evidenced by the related papers DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models and AutoCoder: Enhancing Code with Large Language Models. In addition, DeepSeek-V3 employs a multi-token prediction training objective, which its authors report improves overall performance on evaluation benchmarks.
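The multi-token prediction objective mentioned above can be illustrated with a small sketch. This is not DeepSeek-V3's actual architecture; it only shows the general idea of asking each position to predict several future tokens and folding the extra losses into the ordinary next-token loss. The function names, the `n_future` parameter, and the `weight` value are assumptions made for illustration.

```python
def multi_token_targets(tokens, n_future):
    """Pair each input token with the next `n_future` tokens as targets.

    Positions that do not have `n_future` tokens after them are dropped.
    """
    last = len(tokens) - n_future
    return [(tokens[i], tokens[i + 1 : i + 1 + n_future]) for i in range(last)]


def combined_loss(main_loss, extra_losses, weight):
    """Next-token loss plus a weighted mean of the extra-depth losses.

    `weight` is a hypothetical hyperparameter controlling how much the
    additional predictions contribute to training.
    """
    if not extra_losses:
        return main_loss
    return main_loss + weight * sum(extra_losses) / len(extra_losses)


if __name__ == "__main__":
    # With n_future=2, token 10 must predict both 11 and 12, and so on.
    for source, targets in multi_token_targets([10, 11, 12, 13, 14], n_future=2):
        print(source, "->", targets)
    print(combined_loss(main_loss=2.0, extra_losses=[1.0, 3.0], weight=0.5))
```

Ordinary next-token training is the special case `n_future=1` with no extra losses; the extra targets give the model a denser training signal per sequence.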

Through dynamic adjustment, DeepSeek-V3 keeps the expert load balanced throughout training and achieves better performance than models that encourage load balance through pure auxiliary losses. Because each expert is smaller and more specialized, less memory is required to train the model, and compute costs are lower once the model is deployed. As we funnel down to lower dimensions, we’re essentially performing a learned form of dimensionality reduction that preserves the most promising reasoning pathways while discarding irrelevant directions. DeepSeek’s model is said to perform as well as, or even better than, top Western AI models in certain tasks like math, coding, and reasoning, but at a much lower cost to develop. Unlike other AI models that cost billions to train, DeepSeek claims it built R1 for far less, which has shocked the tech world because it suggests that huge amounts of money may not be needed to build advanced AI. Its release caused a major stir in the tech markets, leading to a drop in stock prices.
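The dynamic adjustment described above can be sketched as a per-expert bias that is nudged after every training step, instead of adding a balancing term to the loss. The code below is a simplified toy under stated assumptions, not DeepSeek's implementation: the function names, the fixed `step_size`, and the tiny two-expert score table are all hypothetical.

```python
def route_top_k(scores, bias, k):
    """Pick the k experts with the highest biased score for one token.

    The bias is assumed to influence only which experts are selected,
    not how their outputs are weighted afterwards.
    """
    ranked = sorted(range(len(scores)), key=lambda e: scores[e] + bias[e], reverse=True)
    return ranked[:k]


def update_bias(bias, load, step_size):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean_load = sum(load) / len(load)
    new_bias = []
    for b, n in zip(bias, load):
        if n > mean_load:
            new_bias.append(b - step_size)
        elif n < mean_load:
            new_bias.append(b + step_size)
        else:
            new_bias.append(b)
    return new_bias


def simulate(token_scores, k=1, step_size=0.07, n_steps=10):
    """Route the same batch repeatedly; return the final bias and last load."""
    n_experts = len(token_scores[0])
    bias = [0.0] * n_experts
    load = [0] * n_experts
    for _ in range(n_steps):
        load = [0] * n_experts
        for scores in token_scores:
            for expert in route_top_k(scores, bias, k):
                load[expert] += 1
        bias = update_bias(bias, load, step_size)
    return bias, load


if __name__ == "__main__":
    # Every token initially prefers expert 0, to a varying degree.
    batch = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.3], [0.6, 0.4]]
    print(simulate(batch))
```

In this toy batch all four tokens start on expert 0; after a few bias updates the tokens with the weakest preference move to expert 1 and the load settles at two tokens per expert, with no auxiliary loss term involved.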

Although this tremendous drop reportedly erased $21 billion from CEO Jensen Huang’s personal wealth, it only returns NVIDIA stock to October 2024 levels, a sign of just how meteoric the rise of AI investments has been. The result is DeepSeek-V3, a large language model with 671 billion parameters. The R1 model, released in early 2025, stands out for its impressive reasoning capabilities, excelling in tasks like mathematics, coding, and natural language processing. This affordability, combined with its strong capabilities, makes it a good choice for businesses and developers seeking powerful AI solutions. Amazon SageMaker JumpStart is a machine learning (ML) hub with foundation models (FMs), built-in algorithms, and prebuilt ML solutions that you can deploy with just a few clicks. DeepSeek, a Chinese AI startup founded by Liang Wenfeng, has quickly risen as a notable challenger in the competitive AI landscape, capturing global attention by offering cutting-edge, cost-efficient AI solutions. Despite being developed on less advanced hardware, it matches the performance of high-end models, offering an open-source option under the MIT license. The mixture of experts, being similar to the Gaussian mixture model, can also be trained by the expectation-maximization algorithm, just like Gaussian mixture models. DeepSeek hasn’t yet proven it can handle some of the massively ambitious AI capabilities for industries that, for now, still require tremendous infrastructure investments.
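To put the 671-billion-parameter figure above in perspective, a back-of-the-envelope calculation of the memory needed just to hold the weights is useful. The sketch below assumes every parameter is stored at a single numeric precision and counts decimal gigabytes; it ignores activations, caches, and optimizer state, so real requirements are higher. The helper name is made up for this example.

```python
def weight_memory_gb(n_params, bytes_per_param):
    """Memory for the weights alone, in decimal gigabytes (10**9 bytes)."""
    return n_params * bytes_per_param / 1e9


if __name__ == "__main__":
    n_params = 671e9  # parameter count quoted in the text
    for name, size in [("32-bit", 4), ("16-bit", 2), ("8-bit", 1)]:
        print(f"{name}: {weight_memory_gb(n_params, size):.0f} GB")
```

Even at 8 bits per parameter the weights alone take several hundred gigabytes, which is why the smaller distilled versions matter for running on modest hardware.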

DeepSeek-R1 employs large-scale reinforcement learning during post-training to refine its reasoning capabilities. The training regimen employed large batch sizes and a multi-step learning rate schedule, ensuring robust and efficient learning. ZeRO: Memory optimizations toward training trillion parameter models. You’ve likely heard of DeepSeek: The Chinese company released a pair of open large language models (LLMs), DeepSeek-V3 in December 2024 and DeepSeek-R1 in January 2025, making them available to anyone for free use and modification. Whether you are working on natural language processing, coding, or complex mathematical problems, DeepSeek-V3 provides top-tier performance, as evidenced by its leading results on various benchmarks. The U.S. ban on exporting advanced AI chips to China is meant to stop Chinese companies from training top-tier LLMs. In a significant departure from proprietary AI development norms, DeepSeek has publicly shared R1’s training frameworks and evaluation criteria. Unlike many big players in the field, DeepSeek has focused on creating efficient, open-source AI models that promise high performance without sky-high development costs. "The earlier Llama models were great open models, but they’re not fit for complex problems." In a recent post on the social network X by Maziyar Panahi, Principal AI/ML/Data Engineer at CNRS, the model was praised as "the world’s best open-source LLM" according to the DeepSeek team’s published benchmarks.
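A multi-step learning rate schedule, as mentioned above, holds the learning rate constant and cuts it by a fixed factor each time training passes a chosen milestone. The following is a minimal sketch of that idea; the milestones, base rate, and decay factor are made-up values, not the ones DeepSeek used.

```python
def multi_step_lr(step, base_lr, milestones, gamma):
    """Return base_lr multiplied by gamma once for every milestone reached."""
    passed = sum(1 for m in milestones if step >= m)
    return base_lr * gamma ** passed


if __name__ == "__main__":
    # Hypothetical schedule: halve the rate at steps 100 and 200.
    for step in (0, 99, 100, 199, 200, 500):
        print(step, multi_step_lr(step, base_lr=1.0, milestones=[100, 200], gamma=0.5))
```

Compared with a smoothly decaying schedule, the step form is easy to reason about: the rate only changes at the listed milestones.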
