What Is DeepSeek?

There are currently no approved non-programmer options for using private data (i.e., sensitive, internal, or highly sensitive data) with DeepSeek. As of now, we recommend using nomic-embed-text embeddings. To put it simply: AI models themselves are no longer a competitive advantage - now, it is all about AI-powered apps. DeepSeek models and their derivatives are all available for public download on Hugging Face, a prominent site for sharing AI/ML models. For extra security, limit use to devices whose ability to send data to the public internet is restricted.

Setting aside the considerable irony of this claim, it is absolutely true that DeepSeek incorporated training data from OpenAI's o1 "reasoning" model, and indeed, this is clearly disclosed in the research paper that accompanied DeepSeek's release. The research highlights how quickly reinforcement learning is maturing as a field (recall that in 2013 the most impressive thing RL could do was play Space Invaders).

In the same year, High-Flyer established High-Flyer AI, which was dedicated to research on AI algorithms and their basic applications. Up to this point, High-Flyer had produced returns 20%-50% higher than stock-market benchmarks over the past few years.

In this framework, most compute-dense operations are performed in FP8, while a few key operations are strategically kept in their original data formats to balance training efficiency and numerical stability. The cost of decentralization: an important caveat is that none of this comes for free - training models in a distributed way reduces the efficiency with which you light up each GPU during training.

What makes DeepSeek so special is the company's claim that it was built at a fraction of the cost of industry-leading models like OpenAI's - because it uses fewer advanced chips. OpenAI is an amazing business. Since release, we've also gotten confirmation of the ChatBotArena ranking that places them in the top 10, above the likes of recent Gemini Pro models, Grok 2, o1-mini, and so on. With only 37B active parameters, this is extremely appealing for many enterprise applications.

There's some murkiness surrounding the type of chip used to train DeepSeek's models, with some unsubstantiated claims stating that the company used A100 chips, which are currently banned from US export to China. Numerous export control laws in recent years have sought to limit the sale of the highest-powered AI chips, such as NVIDIA H100s, to China.
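To make the FP8 mixed-precision idea mentioned above more concrete, here is a minimal pure-Python simulation. It is only a sketch under stated assumptions: the E4M3 format (3 mantissa bits, maximum value 448), the per-vector scaling, and the helper names `round_to_e4m3`, `quantize`, and `fp8_dot` are illustrative choices, not DeepSeek's actual framework. Inputs are rounded to FP8-like precision, while the accumulation (a "key operation") stays in ordinary Python floats.

```python
import math
from typing import List, Tuple

FP8_E4M3_MAX = 448.0  # largest finite value in the E4M3 format (assumed format)


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest value with 4 significant bits, clamped to E4M3 range."""
    if x == 0.0:
        return 0.0
    x = max(-FP8_E4M3_MAX, min(FP8_E4M3_MAX, x))
    _, e = math.frexp(x)       # x = m * 2**e with 0.5 <= |m| < 1
    e = max(e, -5)             # below 2**-6 the format is subnormal: fixed step
    step = 2.0 ** (e - 4)      # 1 implicit + 3 stored mantissa bits
    return round(x / step) * step


def quantize(values: List[float]) -> Tuple[List[float], float]:
    """Scale a vector so its largest magnitude maps to 448, then round to FP8."""
    max_abs = max(abs(v) for v in values) or 1.0
    scale = FP8_E4M3_MAX / max_abs
    return [round_to_e4m3(v * scale) for v in values], scale


def fp8_dot(a: List[float], b: List[float]) -> float:
    """Dot product with FP8-rounded inputs and a higher-precision accumulator."""
    qa, sa = quantize(a)
    qb, sb = quantize(b)
    acc = 0.0                  # accumulation kept in full float precision
    for x, y in zip(qa, qb):
        acc += x * y
    return acc / (sa * sb)     # undo both scaling factors


a = [0.1, 0.2, 0.3, 0.4]
b = [1.0, 2.0, 3.0, 4.0]
exact = sum(x * y for x, y in zip(a, b))
approx = fp8_dot(a, b)
print(f"exact={exact:.4f} fp8={approx:.4f}")
```

The approximate result differs from the exact one by a few percent, which illustrates the trade-off the passage describes: low-precision inputs are cheap, and keeping the accumulator in a wider format limits how much error builds up.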

DeepSeek says that its training only involved older, less powerful NVIDIA chips, but that claim has been met with some skepticism. According to unverified but commonly cited leaks, the training of ChatGPT-4 required roughly 25,000 Nvidia A100 GPUs for 90-100 days. Although this massive drop reportedly erased $21 billion from CEO Jensen Huang's personal wealth, it nevertheless only returns NVIDIA stock to October 2024 levels, a sign of just how meteoric the rise of AI investments has been.

DeepSeek-V3 sets a Multi-Token Prediction (MTP) objective, which extends the prediction scope to multiple future tokens at each position. They use an n-gram filter to remove test data from the training set.

Much has already been made of the apparent plateauing of the "more data equals smarter models" approach to AI development. Conventional wisdom holds that large language models like ChatGPT and DeepSeek need to be trained on ever more high-quality, human-created text to improve; DeepSeek took another approach. There's no simple answer to any of this - everyone (myself included) needs to work out their own morality and approach here.
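The n-gram filter mentioned above can be sketched in a few lines. This is a minimal illustration, not DeepSeek's actual pipeline: the word-level tokenization, the n-gram length, and the function names `ngrams` and `decontaminate` are all assumptions made for the example. A training document is dropped if it shares any n-gram with a test document.

```python
from typing import Iterable, List, Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Return the set of word-level n-grams in text (lowercased, whitespace-split)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def decontaminate(train_docs: Iterable[str],
                  test_docs: Iterable[str],
                  n: int = 10) -> List[str]:
    """Keep only training documents that share no n-gram with any test document."""
    test_ngrams: Set[Tuple[str, ...]] = set()
    for doc in test_docs:
        test_ngrams |= ngrams(doc, n)
    return [doc for doc in train_docs if ngrams(doc, n).isdisjoint(test_ngrams)]


train = [
    "def add(a, b): return a + b  # simple helper",
    "the quick brown fox jumps over the lazy dog",
]
test = ["write a function: def add(a, b): return a + b"]

# n=4 is used here only because the toy documents are short.
kept = decontaminate(train, test, n=4)
print(kept)
```

Documents shorter than `n` tokens produce no n-grams and are always kept, so a real filter would need a separate rule for very short texts.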

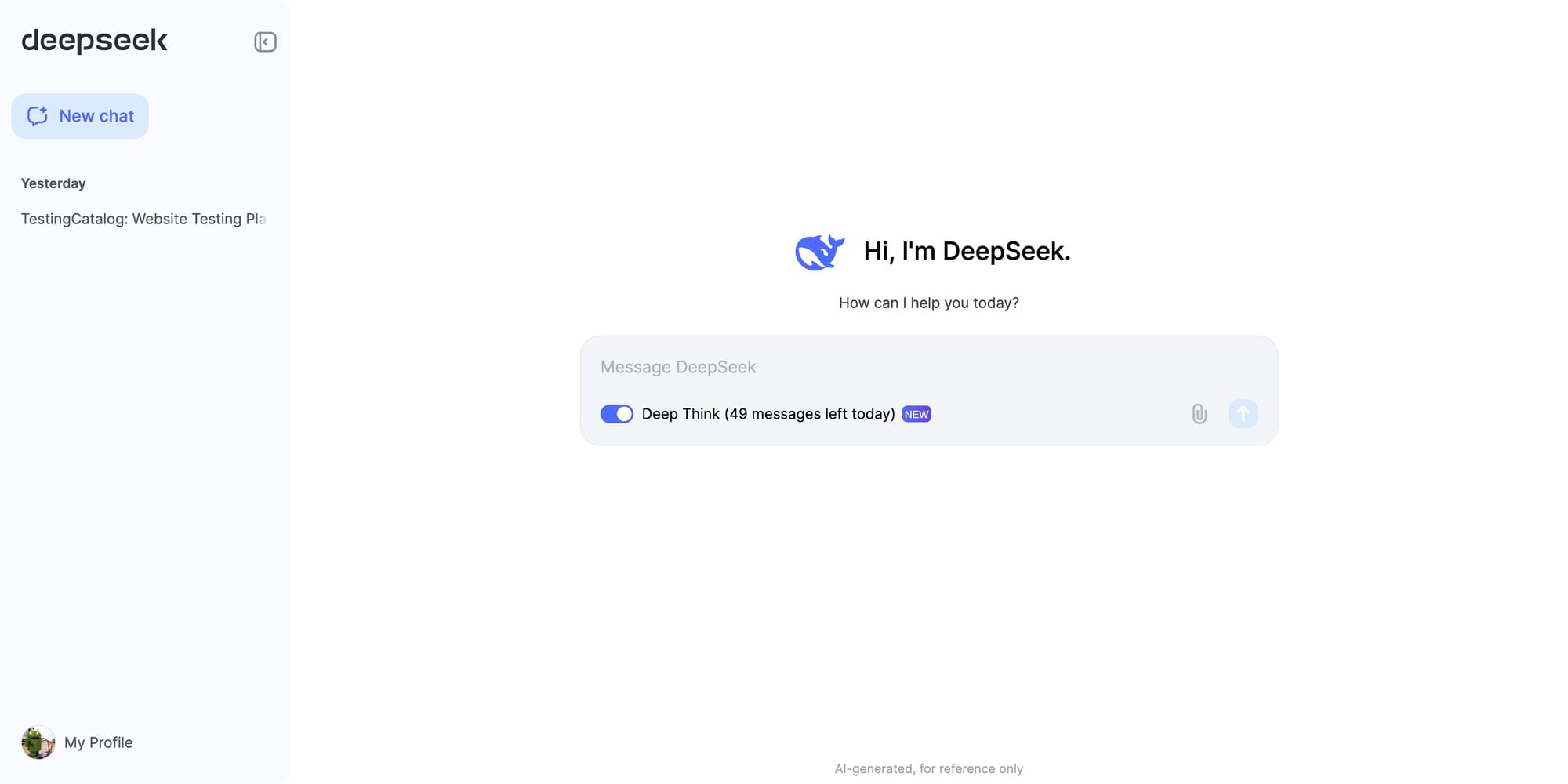

In the long run, what we're seeing here is the commoditization of foundational AI models. That's far harder - and with distributed training, these people could train models as well.

After training, it was deployed on H800 clusters. They minimized communication latency by extensively overlapping computation and communication, for example by dedicating 20 of the 132 streaming multiprocessors on each H800 solely to inter-GPU communication. Communication bandwidth is a critical bottleneck in the training of MoE models. They reduced communication by rearranging (every 10 minutes) the exact machine each expert was on so as to avoid certain machines being queried more often than others, adding auxiliary load-balancing losses to the training loss function, and using other load-balancing techniques.

This repo figures out the cheapest available machine and hosts the ollama model as a Docker image on it. The models can then be run on your own hardware using tools like ollama. The DeepSeek model that everyone is using right now is R1. In the case of DeepSeek, certain biased responses are deliberately baked right into the model: for instance, it refuses to engage in any discussion of Tiananmen Square or other modern controversies related to the Chinese government. There are safer ways to try DeepSeek for both programmers and non-programmers alike.
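As a rough illustration of what an auxiliary load-balancing loss for MoE routing can look like, the sketch below uses the formulation popularized by the Switch Transformer: the number of experts times the sum over experts of (fraction of tokens routed to the expert) times (mean router probability for the expert). This is an assumption chosen for clarity; the text above does not specify the exact loss DeepSeek used, and the function name `load_balance_loss` is hypothetical.

```python
from typing import List


def load_balance_loss(router_probs: List[List[float]], num_experts: int) -> float:
    """Switch-Transformer-style auxiliary loss: num_experts * sum_i f_i * p_i.

    f_i is the fraction of tokens whose top-1 expert is i,
    p_i is the mean router probability assigned to expert i.
    """
    num_tokens = len(router_probs)
    counts = [0] * num_experts
    prob_sums = [0.0] * num_experts
    for probs in router_probs:
        top = max(range(num_experts), key=lambda i: probs[i])  # top-1 routing
        counts[top] += 1
        for i, p in enumerate(probs):
            prob_sums[i] += p
    f = [c / num_tokens for c in counts]
    p = [s / num_tokens for s in prob_sums]
    return num_experts * sum(fi * pi for fi, pi in zip(f, p))


# Two tokens, two experts: one token per expert versus both on expert 0.
balanced = load_balance_loss([[0.9, 0.1], [0.1, 0.9]], num_experts=2)
skewed = load_balance_loss([[0.9, 0.1], [0.8, 0.2]], num_experts=2)
print(balanced, skewed)
```

The loss is smallest when tokens are spread evenly across experts and grows as routing concentrates on a few of them, so adding it to the training loss nudges the router away from overloading particular machines.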
