DeepSeek: Cheap, Powerful Chinese AI for All. What Could Possibly Go W…

DeepSeek is a sophisticated AI-powered platform designed for a range of applications, including conversational AI, natural language processing, and text-based search. You want an AI that excels at creative writing, nuanced language understanding, and complex reasoning tasks. DeepSeek AI has emerged as a significant player in the AI landscape, particularly with its open-source Large Language Models (LLMs), including the powerful DeepSeek-V2 and the highly anticipated DeepSeek-R1. Not all of DeepSeek's cost-cutting techniques are new either; some have been used in other LLMs. It seems likely that smaller companies such as DeepSeek will have a growing role to play in creating AI tools that have the potential to make our lives easier. Researchers will be using this information to investigate how the model's already impressive problem-solving capabilities can be enhanced even further, and those improvements are likely to end up in the next generation of AI models. Experimentation: A risk-free way to explore the capabilities of advanced AI models.

The DeepSeek R1 framework incorporates advanced reinforcement learning techniques, setting new benchmarks in AI reasoning capabilities. DeepSeek has even revealed its unsuccessful attempts at improving LLM reasoning through other technical approaches, such as Monte Carlo Tree Search, an approach long touted as a potential way to guide the reasoning process of an LLM. The disruptive potential of its cost-efficient, high-performing models has led to a broader conversation about open-source AI and its ability to challenge proprietary systems. We allow all models to output a maximum of 8192 tokens for each benchmark. Notably, Latenode advises against setting the max token limit in DeepSeek Coder above 512. Tests have indicated that it may encounter issues when handling more tokens. Finally, the training corpus for DeepSeek-V3 consists of 14.8T high-quality and diverse tokens in our tokenizer. DeepSeek Coder employs a deduplication process to ensure high-quality training data, removing redundant code snippets and focusing on relevant data. The company's privacy policy spells out all of the terrible practices it uses, such as sharing your user data with Baidu search and shipping everything off to be stored on servers controlled by the Chinese government.
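To make the deduplication idea concrete, here is a minimal Python sketch of exact-match deduplication over code snippets. It is an illustration only: the function names `normalize` and `deduplicate` are hypothetical, and the hash-after-normalization approach is an assumption, not a description of the pipeline DeepSeek Coder actually uses.

```python
import hashlib


def normalize(snippet: str) -> str:
    """Drop trailing whitespace and blank lines so that trivial
    formatting differences do not hide a duplicate (assumed rule)."""
    lines = [line.rstrip() for line in snippet.strip().splitlines()]
    return "\n".join(line for line in lines if line)


def deduplicate(snippets):
    """Return the snippets with exact duplicates (after normalization)
    removed, keeping the first occurrence of each."""
    seen = set()
    unique = []
    for snippet in snippets:
        digest = hashlib.sha256(normalize(snippet).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(snippet)
    return unique


corpus = ["a = 1\n", "a = 1   \n\n", "b = 2"]
print(deduplicate(corpus))
```

Real training pipelines often go further and remove near-duplicates as well, which this sketch does not attempt.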

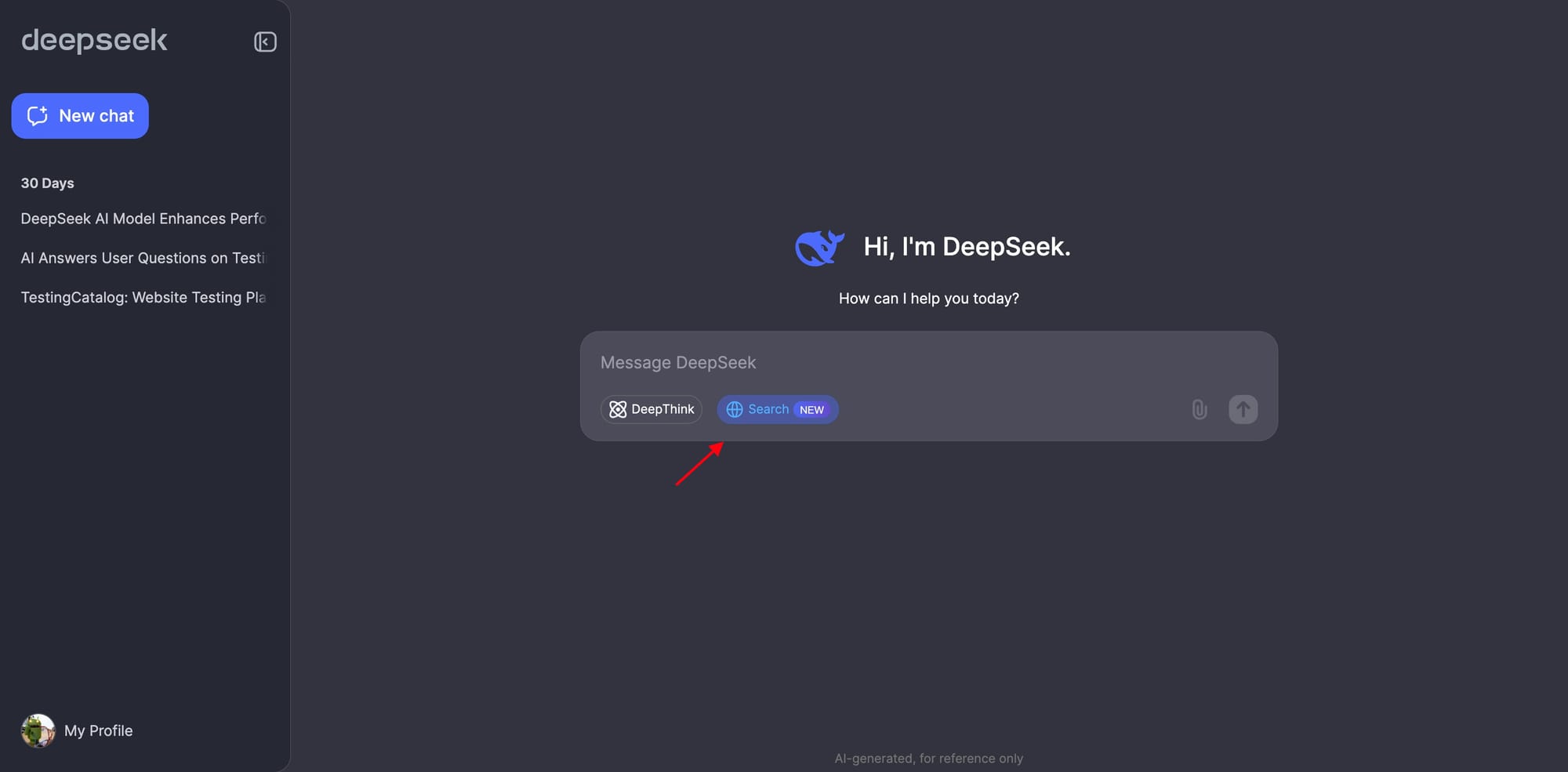

User Interface: Some users find DeepSeek's interface less intuitive than ChatGPT's. How it works: The arena uses the Elo rating system, similar to chess rankings, to rank models based on user votes. So, increasing the efficiency of AI models would be a positive direction for the industry from an environmental point of view. Organizations that use this model gain a significant advantage by staying ahead of industry trends and meeting customer demands. President Donald Trump says this should be a "wake-up call" to the American AI industry and that the White House is working to ensure American dominance in AI continues. R1's base model, V3, reportedly required 2.788 million hours to train (running across many graphics processing units, or GPUs, at the same time), at an estimated cost of under $6m (£4.8m), compared to the more than $100m (£80m) that OpenAI boss Sam Altman says was required to train GPT-4.
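For readers unfamiliar with Elo, the following Python sketch shows how a single user vote between two models could move their ratings. The scale factor of 400 and the K-factor of 32 are conventional chess defaults assumed here for illustration; the arena's actual parameters are not given in this post, and the function names are hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return the new (A, B) ratings after one comparison.

    score_a is 1.0 if users preferred A, 0.0 if they preferred B,
    and 0.5 for a tie. k (assumed 32) controls how far one vote moves a rating.
    """
    exp_a = expected_score(rating_a, rating_b)
    delta = k * (score_a - exp_a)
    # Whatever A gains, B loses, so the total rating is conserved.
    return rating_a + delta, rating_b - delta


# Two equally rated models; users prefer model A in this vote.
print(update_elo(1000.0, 1000.0, 1.0))
```

An upset (a low-rated model beating a high-rated one) moves both ratings more than an expected result does, which is what lets the ranking converge from many noisy votes.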

For example, prompted in Mandarin, Gemini says that it's Chinese company Baidu's Wenxinyiyan chatbot. For example, it refuses to discuss Tiananmen Square. By using AI, NLP, and machine learning, it provides faster, smarter, and more useful results. DeepSeek Chat: A conversational AI, similar to ChatGPT, designed for a wide range of tasks, including content creation, brainstorming, translation, and even code generation. For instance, Nvidia's market value experienced a significant drop following the introduction of DeepSeek AI, as the need for extensive hardware investments decreased. This has led to claims of intellectual property theft from OpenAI, and the loss of billions in market cap for AI chipmaker Nvidia. Google, Microsoft, OpenAI, and Meta also do some very sketchy things through their mobile apps when it comes to privacy, but they don't ship it all off to China. DeepSeek sends far more data from Americans to China than TikTok does, and it freely admits to this. Gives you a rough idea of some of their training data distribution. For DeepSeek-V3, the communication overhead introduced by cross-node expert parallelism results in an inefficient computation-to-communication ratio of approximately 1:1. To tackle this challenge, we design an innovative pipeline parallelism algorithm called DualPipe, which not only accelerates model training by effectively overlapping forward and backward computation-communication phases, but also reduces the pipeline bubbles.
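A toy timing model helps show why a 1:1 computation-to-communication ratio is costly and why overlapping the two phases matters. The Python sketch below is not the DualPipe algorithm; it only compares running compute and communication back to back against an idealized case where they overlap perfectly. The function names and the unit costs are assumptions for illustration.

```python
def step_time(compute: float, comm: float, overlap: bool = False) -> float:
    """Wall-clock time for one chunk of work.

    Without overlap the phases run back to back; with ideal overlap
    the shorter phase is hidden behind the longer one.
    """
    return max(compute, comm) if overlap else compute + comm


def utilization(compute: float, comm: float, overlap: bool = False) -> float:
    """Fraction of wall-clock time spent on useful computation."""
    return compute / step_time(compute, comm, overlap)


# A 1:1 ratio: one unit of compute for every unit of communication.
print(utilization(1.0, 1.0, overlap=False))  # half the time is spent waiting
print(utilization(1.0, 1.0, overlap=True))   # ideal overlap hides communication
```

In this idealized model, perfect overlap doubles throughput at a 1:1 ratio; real schedules fall somewhere between the two cases.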
