Four Lessons About Deepseek It's Worthwhile to Learn Before You Hit Fo…

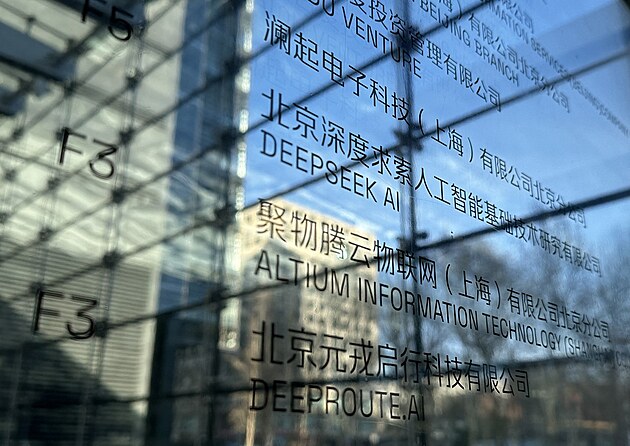

DeepSeek itself reported being hit with a major cyberattack last week. In March 2023, it was reported that High-Flyer was being sued by Shanghai Ruitian Investment LLC for hiring one of its employees. During decoding, we treat the shared expert as a routed one. From this perspective, each token will select 9 experts during routing, where the shared expert is regarded as a heavy-load one that will always be selected. To establish our methodology, we begin by developing an expert model tailored to a specific domain, such as code, mathematics, or general reasoning, using a combined Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) training pipeline. But this development may not necessarily be bad news for the likes of Nvidia in the long run: as the financial and time cost of developing AI products falls, businesses and governments will be able to adopt this technology more easily. The prediction depth D is set to 1, i.e., in addition to the exact next token, each token will predict one additional token. For the deployment of DeepSeek-V3, we set 32 redundant experts for the prefilling stage. To this end, we introduce a deployment strategy of redundant experts, which duplicates high-load experts and deploys them redundantly.
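The routing and redundancy ideas in the paragraph above can be illustrated with a toy sketch. It assumes 8 routed experts per token plus the always-selected shared expert (9 in total, as the text states). The expert count, the random scores, the helper names `route_token`, `expert_load` and `pick_redundant`, and the choice of 4 duplicates (the text uses 32 for prefilling) are all hypothetical and chosen only for illustration. This is not DeepSeek's implementation.

```python
import random
from collections import Counter

NUM_ROUTED = 16          # hypothetical routed-expert count, for illustration only
TOP_K = 8                # routed experts per token; the shared expert makes 9
SHARED_ID = NUM_ROUTED   # hypothetical id assigned to the shared expert


def route_token(scores):
    """Return expert ids for one token: TOP_K routed experts plus the shared one."""
    ranked = sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)
    return ranked[:TOP_K] + [SHARED_ID]


def expert_load(assignments):
    """Count how many tokens were sent to each expert."""
    return Counter(e for experts in assignments for e in experts)


def pick_redundant(load, num_redundant):
    """Pick the most heavily loaded experts as candidates for duplication."""
    return [expert for expert, _ in load.most_common(num_redundant)]


rng = random.Random(0)  # fixed seed so the toy run is repeatable
assignments = [
    route_token([rng.random() for _ in range(NUM_ROUTED)])
    for _ in range(1000)
]
load = expert_load(assignments)
hot_experts = pick_redundant(load, num_redundant=4)
```

Because the shared expert is selected for every token, it always tops the load ranking in this sketch, which matches the text's description of it as a heavy-load expert.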

While acknowledging its strong performance and cost-effectiveness, we also recognize that DeepSeek-V3 has some limitations, especially on the deployment. Through this two-phase extension training, DeepSeek-V3 is capable of handling inputs up to 128K in length while maintaining strong performance. • Forwarding data between the IB (InfiniBand) and NVLink domains while aggregating IB traffic destined for multiple GPUs within the same node from a single GPU. But there's also the mixture of experts, or MoE, approach, in which DeepSeek spreads work across multiple specialized experts to carry out the LLM processes that make its model work. "DeepSeek's highly-skilled team of intelligence experts is made up of the best of the best and is well positioned for strong growth," commented Shana Harris, COO of Warschawski. The base model of DeepSeek-V3 is pretrained on a multilingual corpus with English and Chinese constituting the majority, so we evaluate its performance on a series of benchmarks primarily in English and Chinese, as well as on a multilingual benchmark. Qwen and DeepSeek are two representative model series with robust support for both Chinese and English. The company has two AMAC-regulated subsidiaries, including Zhejiang High-Flyer Asset Management Co., Ltd. Its legal registration address is in Ningbo, Zhejiang, and its main office location is in Hangzhou, Zhejiang.

That's certainly one of the primary reasons why the U.S. Why do they take so much power to run? As for Chinese benchmarks, except for CMMLU, a Chinese multi-subject multiple-choice task, DeepSeek-V3-Base also shows better performance than Qwen2.5 72B. (3) Compared with LLaMA-3.1 405B Base, the largest open-source model with 11 times the activated parameters, DeepSeek-V3-Base also exhibits much better performance on multilingual, code, and math benchmarks. (1) Compared with DeepSeek-V2-Base, due to the improvements in our model architecture, the scale-up of the model size and training tokens, and the enhancement of data quality, DeepSeek-V3-Base achieves significantly better performance as expected. Its training supposedly cost less than $6 million, a shockingly low figure compared with the reported $100 million spent to train ChatGPT's 4o model. … until the model consumes 10T training tokens. … 0.3 for the first 10T tokens, and to 0.1 for the remaining 4.8T tokens. We allow all models to output a maximum of 8192 tokens for each benchmark. Additionally, it is competitive against frontier closed-source models like GPT-4o and Claude-3.5-Sonnet. Rather than seek to build more cost-effective and energy-efficient LLMs, companies like OpenAI, Microsoft, Anthropic, and Google instead saw fit to simply brute-force the technology's development by, in the American tradition, throwing absurd amounts of money and resources at the problem.
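The two-stage setting quoted above (one value for the first 10T training tokens, another for the remaining 4.8T) is a simple piecewise-constant schedule. The sentence in the text has lost its subject, so the sketch below does not guess which hyperparameter is meant. It calls the quantity `coefficient`, a hypothetical name, and only shows how such a schedule could be looked up from the number of tokens consumed so far.

```python
PHASE_ONE_TOKENS = 10_000_000_000_000      # first 10T tokens
PHASE_TWO_TOKENS = 4_800_000_000_000       # remaining 4.8T tokens
TOTAL_TOKENS = PHASE_ONE_TOKENS + PHASE_TWO_TOKENS


def coefficient(tokens_seen: int) -> float:
    """Return the scheduled value for a given number of consumed tokens.

    `coefficient` is a placeholder name: the source does not say which
    hyperparameter follows this schedule.
    """
    if not 0 <= tokens_seen <= TOTAL_TOKENS:
        raise ValueError("tokens_seen is outside the training run")
    return 0.3 if tokens_seen < PHASE_ONE_TOKENS else 0.1
```

For example, `coefficient(5_000_000_000_000)` falls in the first phase, while `coefficient(12_000_000_000_000)` falls in the second.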

DeepSeek just showed the world that none of that is actually necessary: that the "AI Boom" which has helped spur on the American economy in recent months, and which has made GPU companies like Nvidia exponentially more wealthy than they were in October 2023, may be nothing more than a sham, and the nuclear power "renaissance" along with it. Pricing: for publicly available models like DeepSeek-R1, you are charged only the infrastructure price based on the inference instance hours you select for Amazon Bedrock Marketplace, Amazon SageMaker JumpStart, and Amazon EC2. In addition, although the batch-wise load balancing methods show consistent performance advantages, they also face two potential challenges in efficiency: (1) load imbalance within certain sequences or small batches, and (2) domain-shift-induced load imbalance during inference. The technology behind such large language models is the so-called transformer. In addition, on GPQA-Diamond, a PhD-level evaluation testbed, DeepSeek-V3 achieves remarkable results, ranking just behind Claude 3.5 Sonnet and outperforming all other competitors by a substantial margin.
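The first challenge named above, a batch that looks balanced overall while individual sequences are not, can be made concrete with a toy measurement. The sketch assumes top-1 routing for simplicity and uses a hypothetical metric, maximum expert load divided by mean expert load, where 1.0 means perfectly even. The batch contents and the helper name `imbalance` are invented for illustration.

```python
from collections import Counter

NUM_EXPERTS = 4  # hypothetical expert count for the toy example


def imbalance(expert_ids, num_experts):
    """Max expert load divided by mean expert load (1.0 = perfectly balanced).

    Assumes expert_ids is a non-empty list of chosen expert ids.
    """
    counts = Counter(expert_ids)
    mean_load = len(expert_ids) / num_experts
    return max(counts.values()) / mean_load


# Each inner list holds the expert chosen for every token of one sequence.
batch = [
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [2, 2, 2, 2],
    [3, 3, 3, 3],
]

batch_level = imbalance([e for seq in batch for e in seq], NUM_EXPERTS)
per_sequence = [imbalance(seq, NUM_EXPERTS) for seq in batch]
```

Here the batch as a whole is perfectly balanced, yet every sequence sends all of its tokens to a single expert, which is the kind of within-sequence imbalance a batch-wise objective cannot see.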

