DeepSeek Works Only Under These Conditions

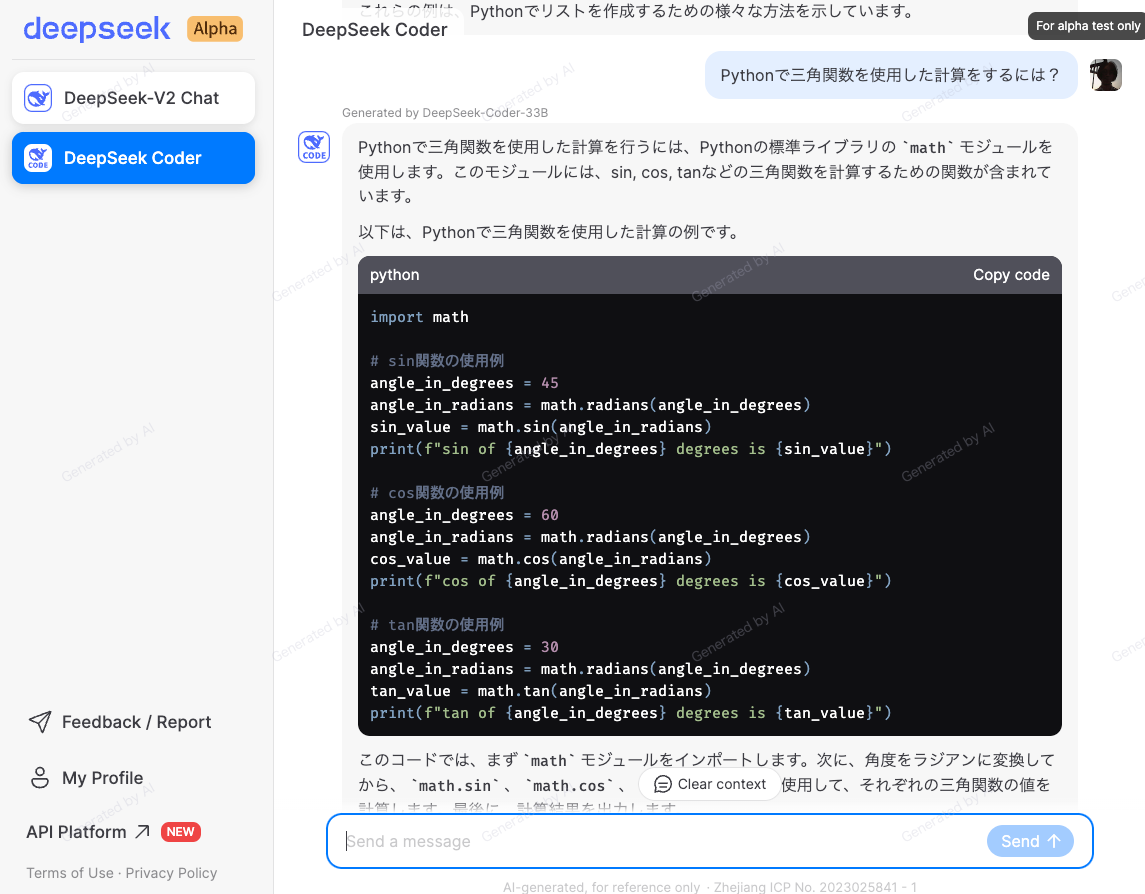

US-based OpenAI has been the leader in the AI industry, but it will be interesting to see how things unfold amid the twists and turns following the launch of the new devil in town, DeepSeek-R1. DeepSeek-R1, or R1, is an open-source language model made by Chinese AI startup DeepSeek that can perform the same text-based tasks as other advanced models, but at a lower cost. A combination of these innovations gives DeepSeek-V2 special features that make it even more competitive among open models than earlier versions. What is behind DeepSeek-Coder-V2 that lets it reportedly beat GPT4-Turbo, Claude-3-Opus, Gemini-1.5-Pro, Llama-3-70B and Codestral in coding and math? Fill-In-The-Middle (FIM): One of the special features of this model is its ability to fill in missing parts of code. The rule-based reward model was manually programmed. Multi-Head Latent Attention (MLA): In a Transformer, attention mechanisms help the model focus on the most relevant parts of the input.
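To make the Fill-In-The-Middle idea concrete, here is a minimal sketch of how a FIM prompt could be assembled and how the generated middle is spliced back in. The sentinel strings `<FIM_BEGIN>`, `<FIM_HOLE>` and `<FIM_END>` and both helper functions are hypothetical placeholders for illustration; a real model defines its own special tokens, and the "generated" text below is a stand-in rather than actual model output.

```python
# Hypothetical sentinel strings: real models define their own special tokens,
# so check the tokenizer of the model you actually use.
FIM_BEGIN = "<FIM_BEGIN>"
FIM_HOLE = "<FIM_HOLE>"
FIM_END = "<FIM_END>"


def build_fim_prompt(prefix: str, suffix: str) -> str:
    """Wrap the code before and after the gap so the model can generate the middle."""
    return f"{FIM_BEGIN}{prefix}{FIM_HOLE}{suffix}{FIM_END}"


def splice_completion(prefix: str, middle: str, suffix: str) -> str:
    """Insert the generated middle back between the prefix and the suffix."""
    return prefix + middle + suffix


prefix = "def add(a, b):\n    "
suffix = "\n\nprint(add(1, 2))\n"
prompt = build_fim_prompt(prefix, suffix)

# Stand-in for a model call; a FIM-capable model would be asked to produce this.
generated_middle = "return a + b"
completed = splice_completion(prefix, generated_middle, suffix)
print(completed)
```

The point of the layout is that the model sees both the code before the gap and the code after it, so the completion can be consistent with what follows, not only with what precedes.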

Fine-grained expert segmentation: DeepSeekMoE breaks down each expert into smaller, more focused parts. However, such a complex large model with many interacting parts still has several limitations. This allows the model to process information faster and with less memory without losing accuracy. This approach lets models handle different aspects of data more effectively, improving efficiency and scalability in large-scale tasks. By implementing these strategies, DeepSeekMoE improves the efficiency of the model, allowing it to perform better than other MoE models, especially when handling larger datasets. A traditional Mixture of Experts (MoE) architecture divides tasks among multiple expert models, selecting the most relevant expert(s) for each input using a gating mechanism. U.S. lawmakers are taking steps to ban DeepSeek from government devices, which should signal to enterprises the inherent risks of using the service. This approach signifies the beginning of a new era in scientific discovery in machine learning: bringing the transformative benefits of AI agents to the entire research process of AI itself, and taking us closer to a world where endless affordable creativity and innovation can be unleashed on the world's most challenging problems. Conversely, the lesser expert can become better at predicting other kinds of input, and is increasingly pulled away into another region.
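One way to see why splitting experts into smaller parts can help is to count how many distinct expert combinations the gate can choose from. The sketch below is only an illustration: the sizes (16 experts, 2 active, split factor 4) and the helper names are assumptions chosen for the example, not figures taken from this article.

```python
import math


def routing_combinations(num_experts: int, active_experts: int) -> int:
    """Number of distinct expert sets the gate can pick for one input."""
    return math.comb(num_experts, active_experts)


def split_experts(num_experts: int, active_experts: int, split_factor: int):
    """Split every expert into `split_factor` smaller ones and activate
    proportionally more of them, so the active compute stays roughly equal."""
    return num_experts * split_factor, active_experts * split_factor


coarse = (16, 2)                             # illustrative: 16 experts, 2 active
fine = split_experts(*coarse, split_factor=4)  # becomes 64 experts, 8 active

print(routing_combinations(*coarse))  # 120
print(routing_combinations(*fine))    # 4426165368
```

With the same share of experts active, the finer split offers vastly more possible combinations, which is the intuition behind letting each small expert specialize more narrowly.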

The router is a mechanism that decides which expert (or experts) should handle a particular piece of data or task. When data comes into the model, the router directs it to the most appropriate experts based on their specialization. Model size and architecture: The DeepSeek-Coder-V2 model comes in two main sizes: a smaller version with 16 B parameters and a larger one with 236 B parameters. Its release comes just days after DeepSeek made headlines with its R1 language model, which reportedly matched GPT-4's capabilities while costing just $5 million to develop, sparking a heated debate about the current state of the AI industry. Adding more elaborate real-world examples has been one of our main goals since we launched DevQualityEval, and this release marks a major milestone towards this goal. With increasing competition, OpenAI might add more advanced features or release some paywalled models for free. Like, Shawn Wang and I were at a hackathon at OpenAI maybe a year and a half ago, and they would host an event in their office. MoE in DeepSeek-V2 works like DeepSeekMoE, which we've explored earlier.
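The routing step described above can be sketched as a top-k gate: score every expert, keep the best few, and blend their outputs by renormalized weight. This is a toy sketch under stated assumptions, not DeepSeek's implementation: the experts are plain functions, the router scores are hard-coded (in a real model they come from a learned layer), and all names are hypothetical.

```python
import math
from typing import Callable, List, Tuple


def softmax(scores: List[float]) -> List[float]:
    """Turn raw router scores into probabilities (numerically stable)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(scores: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x: float, scores: List[float],
                experts: List[Callable[[float], float]], k: int = 2) -> float:
    """Run only the selected experts and blend their outputs by gate weight."""
    return sum(weight * experts[i](x) for i, weight in route(scores, k))


# Toy experts; in a real model each would be a feed-forward network.
experts = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: x * x, lambda x: -x]
scores = [0.1, 2.0, 1.5, -1.0]  # hypothetical router scores for one input

chosen = route(scores, k=2)
output = moe_forward(3.0, scores, experts, k=2)
print(chosen, output)
```

Only the two selected experts are evaluated for this input; the other two cost nothing, which is where the sparse computation in an MoE layer comes from.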

Sophisticated architecture with Transformers, MoE and MLA. Risk of losing information while compressing data in MLA. Risk of biases because DeepSeek-V2 is trained on vast amounts of data from the internet. Mixture-of-Experts (MoE): Instead of using all 236 billion parameters for every task, DeepSeek-V2 only activates a portion (21 billion) based on what it needs to do. DeepSeek-Coder-V2 is an open-source Mixture-of-Experts (MoE) code language model. Please note that use of this model is subject to the terms outlined in the License section. High-Flyer stated that its AI models did not time trades well, although its stock selection was fine in terms of long-term value. In fact, Nvidia's market loss following the launch of DeepSeek's large language model (LLM) marks the largest one-day loss in market value for a single company in stock market history, according to Forbes. DeepSeek-V2 is a state-of-the-art language model that uses a Transformer architecture combined with an innovative MoE system and a specialized attention mechanism called Multi-Head Latent Attention (MLA). Sparse computation thanks to the use of MoE. DeepSeekMoE is an advanced version of the MoE architecture designed to improve how LLMs handle complex tasks. As mentioned earlier, Solidity support in LLMs is often an afterthought and there is a dearth of training data (as compared to, say, Python).
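The memory saving and the information-loss risk attributed to MLA both follow from caching a small latent vector per token instead of full keys and values. The sketch below illustrates only that low-rank compression idea under loud assumptions: the sizes are tiny and made up, the weights are random, and multiple heads, positional encoding and the attention computation itself are all omitted. It is not DeepSeek-V2's actual layer.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def random_matrix(rows, cols, rng):
    """Random weights standing in for learned projections."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
d_model, d_latent = 16, 4  # illustrative sizes, not DeepSeek-V2's
num_tokens = 8

W_down = random_matrix(d_latent, d_model, rng)  # compress the hidden state
W_up_k = random_matrix(d_model, d_latent, rng)  # rebuild keys from the latent
W_up_v = random_matrix(d_model, d_latent, rng)  # rebuild values from the latent

# Cache only the small latent vector for each token.
latent_cache = []
for _ in range(num_tokens):
    hidden = [rng.uniform(-1.0, 1.0) for _ in range(d_model)]
    latent_cache.append(matvec(W_down, hidden))

# Keys and values are reconstructed from the cache when attention needs them.
keys = [matvec(W_up_k, latent) for latent in latent_cache]
values = [matvec(W_up_v, latent) for latent in latent_cache]

standard_floats = num_tokens * 2 * d_model  # caching full keys and values
latent_floats = num_tokens * d_latent       # caching latents only
print(standard_floats, latent_floats)       # 256 32
```

Because the latent has fewer dimensions than the hidden state, the reconstructed keys and values cannot carry everything the original vectors did, which is the compression trade-off the text calls a risk.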
